fleurs


Download link:

https://modelscope.cn/datasets/google/fleurs

下载链接

链接失效反馈资源简介:

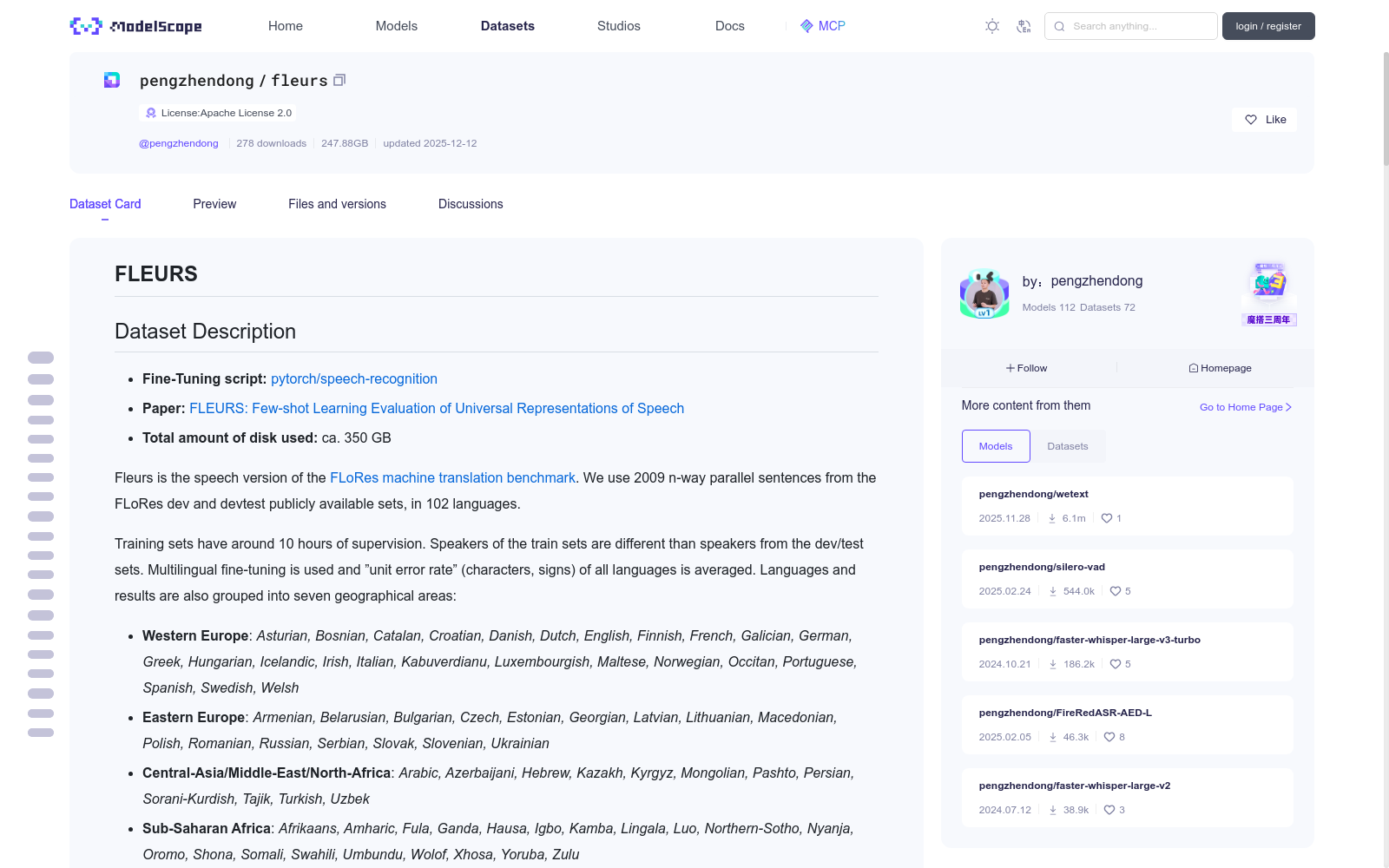

# FLEURS

## Dataset Description

- **Fine-Tuning script:** [pytorch/speech-recognition](https://github.com/huggingface/transformers/tree/main/examples/pytorch/speech-recognition)

- **Paper:** [FLEURS: Few-shot Learning Evaluation of Universal Representations of Speech](https://arxiv.org/abs/2205.12446)

- **Total amount of disk used:** ca. 350 GB

FLEURS is the speech version of the [FLoRes machine translation benchmark](https://arxiv.org/abs/2106.03193).

We use 2009 n-way parallel sentences from the publicly available FLoRes dev and devtest sets, in 102 languages.

Training sets have around 10 hours of supervision. Speakers in the train sets are different from speakers in the dev/test sets. Multilingual fine-tuning is used, and the "unit error rate" (characters, signs) is averaged over all languages. Languages and results are also grouped into seven geographical areas:

- **Western Europe**: *Asturian, Bosnian, Catalan, Croatian, Danish, Dutch, English, Finnish, French, Galician, German, Greek, Hungarian, Icelandic, Irish, Italian, Kabuverdianu, Luxembourgish, Maltese, Norwegian, Occitan, Portuguese, Spanish, Swedish, Welsh*

- **Eastern Europe**: *Armenian, Belarusian, Bulgarian, Czech, Estonian, Georgian, Latvian, Lithuanian, Macedonian, Polish, Romanian, Russian, Serbian, Slovak, Slovenian, Ukrainian*

- **Central-Asia/Middle-East/North-Africa**: *Arabic, Azerbaijani, Hebrew, Kazakh, Kyrgyz, Mongolian, Pashto, Persian, Sorani-Kurdish, Tajik, Turkish, Uzbek*

- **Sub-Saharan Africa**: *Afrikaans, Amharic, Fula, Ganda, Hausa, Igbo, Kamba, Lingala, Luo, Northern-Sotho, Nyanja, Oromo, Shona, Somali, Swahili, Umbundu, Wolof, Xhosa, Yoruba, Zulu*

- **South-Asia**: *Assamese, Bengali, Gujarati, Hindi, Kannada, Malayalam, Marathi, Nepali, Oriya, Punjabi, Sindhi, Tamil, Telugu, Urdu*

- **South-East Asia**: *Burmese, Cebuano, Filipino, Indonesian, Javanese, Khmer, Lao, Malay, Maori, Thai, Vietnamese*

- **CJK languages**: *Cantonese and Mandarin Chinese, Japanese, Korean*
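
The averaged "unit error rate" mentioned above can be illustrated with a minimal sketch. It assumes that units are characters, that the rate for one language is the total edit distance divided by the total reference length, and that languages are macro-averaged (each language counts equally). The function names and the toy data are hypothetical; the paper's exact scoring procedure may differ.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences of units (here: characters)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def unit_error_rate(pairs):
    """Total edit distance over total reference length for (ref, hyp) pairs."""
    errors = sum(edit_distance(ref, hyp) for ref, hyp in pairs)
    units = sum(len(ref) for ref, _ in pairs)
    return errors / units


def average_over_languages(results):
    """Macro-average: every language contributes equally (an assumption)."""
    per_lang = {lang: unit_error_rate(pairs) for lang, pairs in results.items()}
    return per_lang, sum(per_lang.values()) / len(per_lang)


# Toy, made-up (reference, hypothesis) pairs; these are not real FLEURS outputs.
toy_results = {
    "af_za": [("dit is", "dit as")],  # one substitution in six characters
    "hi_in": [("abcd", "abcd")],      # perfect match
}
per_lang, macro = average_over_languages(toy_results)
print(per_lang, macro)
```

The same averaging could be applied per geographical group by passing only that group's languages to `average_over_languages`.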

## How to use & Supported Tasks

### How to use

The `datasets` library allows you to load and pre-process your dataset in pure Python, at scale. The dataset can be downloaded and prepared in one call to your local drive by using the `load_dataset` function.

For example, to download the Hindi config, simply specify the corresponding language config name (e.g., "hi_in" for Hindi):

```python

from datasets import load_dataset

fleurs = load_dataset("google/fleurs", "hi_in", split="train")

```

Using the datasets library, you can also stream the dataset on-the-fly by adding a `streaming=True` argument to the `load_dataset` function call. Loading a dataset in streaming mode loads individual samples of the dataset at a time, rather than downloading the entire dataset to disk.

```python

from datasets import load_dataset

fleurs = load_dataset("google/fleurs", "hi_in", split="train", streaming=True)

print(next(iter(fleurs)))

```

*Bonus*: create a [PyTorch dataloader](https://huggingface.co/docs/datasets/use_with_pytorch) directly with your own datasets (local/streamed).

Local:

```python
from datasets import load_dataset
from torch.utils.data import DataLoader
from torch.utils.data.sampler import BatchSampler, RandomSampler

fleurs = load_dataset("google/fleurs", "hi_in", split="train")

# draw shuffled batches of 32 indices, keeping the final smaller batch
batch_sampler = BatchSampler(RandomSampler(fleurs), batch_size=32, drop_last=False)
dataloader = DataLoader(fleurs, batch_sampler=batch_sampler)
```

Streaming:

```python
from datasets import load_dataset
from torch.utils.data import DataLoader

# streaming=True returns an iterable dataset that is read on the fly
fleurs = load_dataset("google/fleurs", "hi_in", split="train", streaming=True)
dataloader = DataLoader(fleurs, batch_size=32)
```
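
Audio clips differ in length, so batching them generally requires padding. The sketch below shows one possible collate function, under the assumption that each example exposes `example["audio"]["array"]` and `example["transcription"]` as in the data instance shown in this card. `pad_collate` is a hypothetical helper, not part of `datasets` or PyTorch, and it returns plain Python lists to stay dependency-free; in practice you would likely convert the padded batch to tensors.

```python
def pad_collate(batch, pad_value=0.0):
    """Pad every audio array in the batch to the length of the longest one."""
    lengths = [len(ex["audio"]["array"]) for ex in batch]
    max_len = max(lengths)
    padded = [
        list(ex["audio"]["array"]) + [pad_value] * (max_len - n)
        for ex, n in zip(batch, lengths)
    ]
    return {
        "audio": padded,        # equal-length rows, zero-padded on the right
        "lengths": lengths,     # original lengths, useful for masking
        "transcription": [ex["transcription"] for ex in batch],
    }


# Toy batch with made-up values standing in for two FLEURS examples.
toy_batch = [
    {"audio": {"array": [0.1, 0.2, 0.3]}, "transcription": "a"},
    {"audio": {"array": [0.5]}, "transcription": "b"},
]
print(pad_collate(toy_batch))
```

Such a function could be passed to the loader as `DataLoader(fleurs, batch_size=32, collate_fn=pad_collate)`.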

To find out more about loading and preparing audio datasets, head over to [hf.co/blog/audio-datasets](https://huggingface.co/blog/audio-datasets).

### Example scripts

Train your own CTC or Seq2Seq Automatic Speech Recognition models on FLEURS with `transformers` - [here](https://github.com/huggingface/transformers/tree/main/examples/pytorch/speech-recognition).

Fine-tune your own Language Identification models on FLEURS with `transformers` - [here](https://github.com/huggingface/transformers/tree/main/examples/pytorch/audio-classification).

### 1. Speech Recognition (ASR)

```py

from datasets import load_dataset

fleurs_asr = load_dataset("google/fleurs", "af_za") # for Afrikaans

# to download all data for multilingual fine-tuning, uncomment the following line

# fleurs_asr = load_dataset("google/fleurs", "all")

# see structure

print(fleurs_asr)

# load audio sample on the fly

audio_input = fleurs_asr["train"][0]["audio"] # first decoded audio sample

transcription = fleurs_asr["train"][0]["transcription"] # first transcription

# use `audio_input` and `transcription` to fine-tune your model for ASR

# for analyses see language groups

all_language_groups = fleurs_asr["train"].features["lang_group_id"].names

lang_group_id = fleurs_asr["train"][0]["lang_group_id"]

all_language_groups[lang_group_id]

```

### 2. Language Identification

LangID can often reduce to domain classification, but in the case of FLEURS-LangID, recordings are done in a similar setting across languages and the utterances correspond to n-way parallel sentences in the exact same domain, making this task particularly relevant for evaluating LangID. The setting is simple: FLEURS-LangID is split into train/valid/test for each language, and we create a single train/valid/test for LangID by merging all languages.

```py

from datasets import load_dataset

fleurs_langID = load_dataset("google/fleurs", "all") # to download all data

# see structure

print(fleurs_langID)

# load audio sample on the fly

audio_input = fleurs_langID["train"][0]["audio"] # first decoded audio sample

language_class = fleurs_langID["train"][0]["lang_id"] # first id class

language = fleurs_langID["train"].features["lang_id"].names[language_class]

# use audio_input and language_class to fine-tune your model for audio classification

```
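
The merging step described for LangID can be sketched as follows. This is only an illustration with made-up data: the `"all"` config already provides the merged splits and a `lang_id` label, and the sorted-order id assignment used here is an assumption, not necessarily the mapping used by the dataset. `merge_for_langid` is a hypothetical helper.

```python
def merge_for_langid(per_language):
    """Merge per-language splits into single train/validation/test pools."""
    languages = sorted(per_language)  # assumed ordering for class ids
    merged = {"train": [], "validation": [], "test": []}
    for lang_id, lang in enumerate(languages):
        for split, examples in per_language[lang].items():
            for ex in examples:
                # copy the example and attach its language class id
                merged[split].append({**ex, "lang_id": lang_id})
    return merged, languages


# Toy stand-ins for two language configs; the ids are made up.
toy = {
    "hi_in": {"train": [{"id": 1}], "validation": [{"id": 2}], "test": []},
    "af_za": {"train": [{"id": 3}, {"id": 4}], "validation": [], "test": [{"id": 5}]},
}
merged, languages = merge_for_langid(toy)
print(languages, {split: len(rows) for split, rows in merged.items()})
```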

### 3. Retrieval

Retrieval provides n-way parallel speech and text data. Similar to how XTREME for text leverages Tatoeba to evaluate bitext mining (a.k.a. sentence translation retrieval), we use Retrieval to evaluate the quality of fixed-size representations of speech utterances. Our goal is to incentivize the creation of fixed-size speech encoders for speech retrieval. The system has to retrieve the English "key" utterance corresponding to the speech translation of "queries" in 15 languages. Results have to be reported on the test sets of Retrieval, whose utterances are used as queries (and as keys for English). We augment the English keys with a large number of utterances to make the task more difficult.

```py

from datasets import load_dataset

fleurs_retrieval = load_dataset("google/fleurs", "af_za") # for Afrikaans

# to download all data for multilingual fine-tuning, uncomment the following line

# fleurs_retrieval = load_dataset("google/fleurs", "all")

# see structure

print(fleurs_retrieval)

# load audio sample on the fly

audio_input = fleurs_retrieval["train"][0]["audio"] # decoded audio sample

text_sample_pos = fleurs_retrieval["train"][0]["transcription"] # positive text sample

text_sample_neg = fleurs_retrieval["train"][1:20]["transcription"] # negative text samples

# use `audio_input`, `text_sample_pos`, and `text_sample_neg` to fine-tune your model for retrieval

```

Users can leverage the training (and dev) sets of FLEURS-Retrieval with a ranking loss to build better cross-lingual fixed-size representations of speech.
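
As a rough illustration of retrieval over fixed-size representations with a ranking loss, the sketch below scores keys by cosine similarity and computes a hinge-style margin loss. The embeddings are made-up two-dimensional vectors; the choice of cosine similarity, the margin value, and the function names are all assumptions rather than the setup used in the FLEURS paper.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm


def retrieve(query, keys):
    """Index of the key embedding most similar to the query embedding."""
    return max(range(len(keys)), key=lambda i: cosine(query, keys[i]))


def margin_ranking_loss(query, pos_key, neg_keys, margin=0.2):
    """Hinge loss: the positive key should beat each negative by `margin`."""
    pos = cosine(query, pos_key)
    losses = [max(0.0, margin - pos + cosine(query, neg)) for neg in neg_keys]
    return sum(losses) / len(losses)


# Made-up embeddings: three English "keys" and one non-English "query".
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
query = [0.9, 0.1]

best = retrieve(query, keys)
loss = margin_ranking_loss(query, keys[0], [keys[1], keys[2]])
print(best, loss)
```

In a real system the vectors would come from a speech encoder, and the loss would be minimized over batches built from the train and dev sets.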

## Dataset Structure

We show detailed information for the example configuration `af_za` of the dataset.

All other configurations have the same structure.

### Data Instances

**af_za**

- Size of downloaded dataset files: 1.47 GB

- Size of the generated dataset: 1 MB

- Total amount of disk used: 1.47 GB

An example of a data instance of the config `af_za` looks as follows:

```

{'id': 91,

'num_samples': 385920,

'path': '/home/patrick/.cache/huggingface/datasets/downloads/extracted/310a663d52322700b3d3473cbc5af429bd92a23f9bc683594e70bc31232db39e/home/vaxelrod/FLEURS/oss2_obfuscated/af_za/audio/train/17797742076841560615.wav',

'audio': {'path': '/home/patrick/.cache/huggingface/datasets/downloads/extracted/310a663d52322700b3d3473cbc5af429bd92a23f9bc683594e70bc31232db39e/home/vaxelrod/FLEURS/oss2_obfuscated/af_za/audio/train/17797742076841560615.wav',

'array': array([ 0.0000000e+00, 0.0000000e+00, 0.0000000e+00, ...,

-1.1205673e-04, -8.4638596e-05, -1.2731552e-04], dtype=float32),

'sampling_rate': 16000},

'raw_transcription': 'Dit is nog nie huidiglik bekend watter aantygings gemaak sal word of wat owerhede na die seun gelei het nie maar jeugmisdaad-verrigtinge het in die federale hof begin',

'transcription': 'dit is nog nie huidiglik bekend watter aantygings gemaak sal word of wat owerhede na die seun gelei het nie maar jeugmisdaad-verrigtinge het in die federale hof begin',

'gender': 0,

'lang_id': 0,

'language': 'Afrikaans',

'lang_group_id': 3}

```

### Data Fields

The data fields are the same among all splits.

- **id** (int): ID of audio sample

- **num_samples** (int): Number of float values

- **path** (str): Path to the audio file

- **audio** (dict): Audio object including the loaded audio array, the sampling rate, and the path to the audio file

- **raw_transcription** (str): The non-normalized transcription of the audio file

- **transcription** (str): Transcription of the audio file

- **gender** (int): Class id of gender

- **lang_id** (int): Class id of language

- **language** (str): Name of the language

- **lang_group_id** (int): Class id of language group
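
The fields above can be combined to derive the clip duration. This small sketch assumes that `num_samples` counts the values in the audio array at the listed `sampling_rate`; `duration_seconds` is a hypothetical helper, and the numbers are taken from the `af_za` instance shown earlier.

```python
def duration_seconds(example):
    """Clip length in seconds from the sample count and the sampling rate."""
    return example["num_samples"] / example["audio"]["sampling_rate"]


# Values copied from the example data instance in this card.
example = {"num_samples": 385920, "audio": {"sampling_rate": 16000}}
print(duration_seconds(example))  # 24.12
```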

### Data Splits

Every config has a `"train"` split containing *ca.* 1000 examples, and `"validation"` and `"test"` splits each containing *ca.* 400 examples.

## Dataset Creation

We collect between one and three recordings for each sentence (2.3 on average), and build new train-dev-test splits with 1509, 150 and 350 sentences for train, dev and test respectively.

## Considerations for Using the Data

### Social Impact of Dataset

This dataset is meant to encourage the development of speech technology in many more languages of the world. One of the goals is to give everyone equal access to technologies like speech recognition or speech translation, meaning better dubbing or better access to content from the internet (like podcasts, streaming or videos).

### Discussion of Biases

Most datasets, including the newly introduced FLEURS dataset, have a fair distribution of utterances across genders. While many languages from various regions of the world are covered, the benchmark misses many languages that are all equally important. We believe technology built through FLEURS should generalize to all languages.

### Other Known Limitations

The dataset has a particular focus on read speech because common evaluation benchmarks like CoVoST-2 or LibriSpeech evaluate on this type of speech. There is sometimes a known mismatch between performance obtained in a read-speech setting and in a noisier setting (in production, for instance). Given the substantial progress that remains to be made on many languages, we believe better performance on FLEURS should still correlate well with actual progress made for speech understanding.

## Additional Information

All datasets are licensed under the [Creative Commons license (CC-BY)](https://creativecommons.org/licenses/).

### Citation Information

You can access the FLEURS paper at https://arxiv.org/abs/2205.12446.

Please cite the paper when referencing the FLEURS corpus as:

```
@article{fleurs2022arxiv,
  title = {FLEURS: Few-shot Learning Evaluation of Universal Representations of Speech},
  author = {Conneau, Alexis and Ma, Min and Khanuja, Simran and Zhang, Yu and Axelrod, Vera and Dalmia, Siddharth and Riesa, Jason and Rivera, Clara and Bapna, Ankur},
  journal = {arXiv preprint arXiv:2205.12446},
  url = {https://arxiv.org/abs/2205.12446},
  year = {2022},
}
```

### Contributions

Thanks to [@patrickvonplaten](https://github.com/patrickvonplaten) and [@aconneau](https://github.com/aconneau) for adding this dataset.


Provider: maas

Created: 2025-04-21
