garage-bAInd/Open-Platypus

Source: Hugging Face. Updated 2024-01-24; indexed 2024-03-04.

Download link: https://hf-mirror.com/datasets/garage-bAInd/Open-Platypus


---
configs:
- config_name: default
  data_files:
  - split: train
    path: data/train-*
dataset_info:
  features:
  - name: input
    dtype: string
  - name: output
    dtype: string
  - name: instruction
    dtype: string
  - name: data_source
    dtype: string
  splits:
  - name: train
    num_bytes: 30776452
    num_examples: 24926
  download_size: 15565850
  dataset_size: 30776452
language:
- en
size_categories:
- 10K<n<100K
---

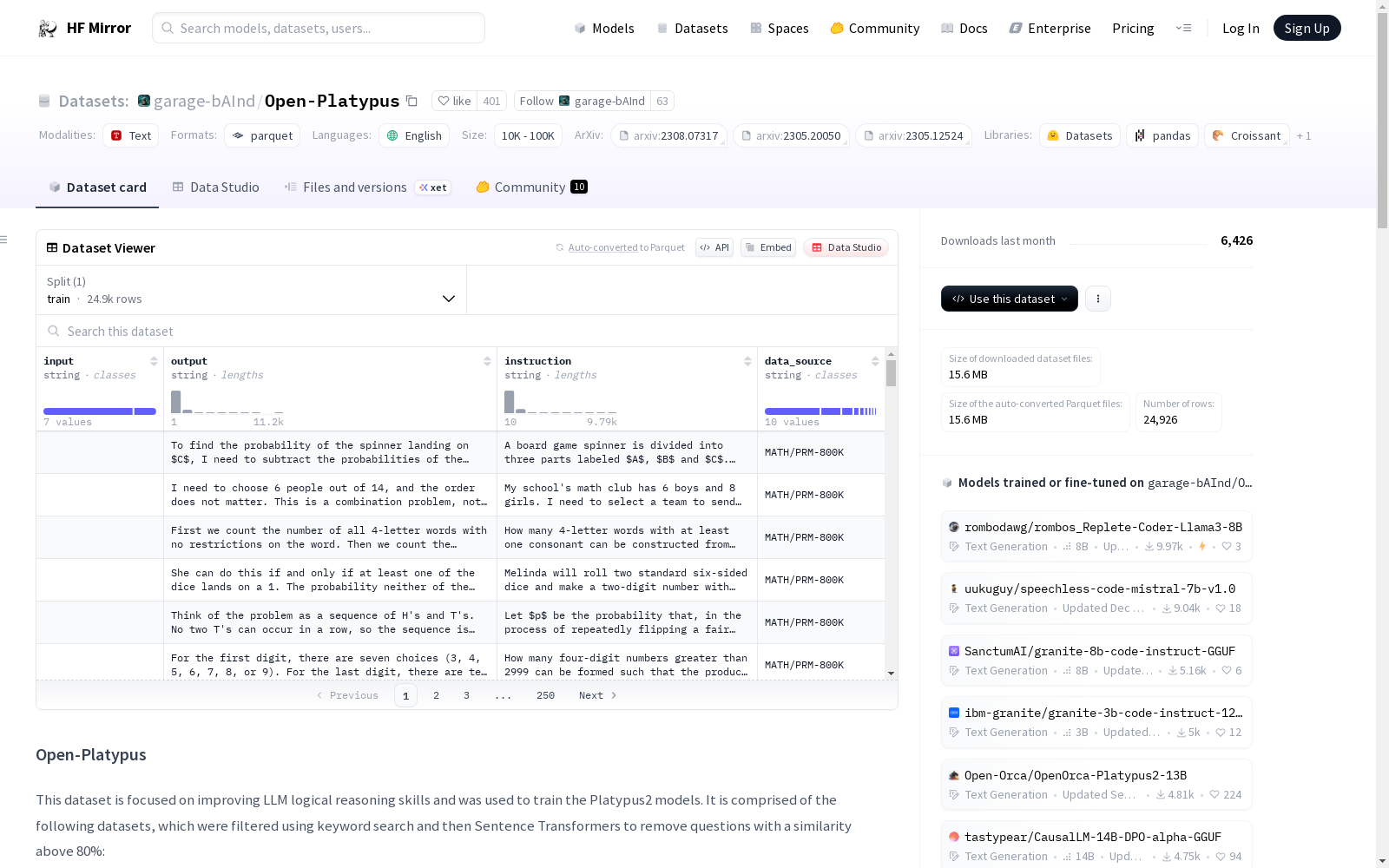

# Open-Platypus

This dataset is focused on improving LLM logical reasoning skills and was used to train the Platypus2 models. It comprises the following datasets, which were filtered using keyword search and then Sentence Transformers to remove questions with a similarity above 80%:

| Dataset Name | License Type |
|--------------------------------------------------------------|--------------|
| [PRM800K](https://github.com/openai/prm800k) | MIT |
| [MATH](https://github.com/hendrycks/math) | MIT |
| [ScienceQA](https://github.com/lupantech/ScienceQA) | [Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International](https://creativecommons.org/licenses/by-nc-sa/4.0/) |
| [SciBench](https://github.com/mandyyyyii/scibench) | MIT |
| [ReClor](https://whyu.me/reclor/) | Non-commercial |
| [TheoremQA](https://huggingface.co/datasets/wenhu/TheoremQA) | MIT |
| [`nuprl/leetcode-solutions-python-testgen-gpt4`](https://huggingface.co/datasets/nuprl/leetcode-solutions-python-testgen-gpt4/viewer/nuprl--leetcode-solutions-python-testgen-gpt4/train?p=1) | None listed |
| [`jondurbin/airoboros-gpt4-1.4.1`](https://huggingface.co/datasets/jondurbin/airoboros-gpt4-1.4.1) | other |
| [`TigerResearch/tigerbot-kaggle-leetcodesolutions-en-2k`](https://huggingface.co/datasets/TigerResearch/tigerbot-kaggle-leetcodesolutions-en-2k/viewer/TigerResearch--tigerbot-kaggle-leetcodesolutions-en-2k/train?p=2) | apache-2.0 |
| [ARB](https://arb.duckai.org) | CC BY 4.0 |
| [`timdettmers/openassistant-guanaco`](https://huggingface.co/datasets/timdettmers/openassistant-guanaco) | apache-2.0 |
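
The similarity filter described above (drop questions whose similarity to another question is above 80%) can be illustrated with a minimal sketch. This is not the authors' filtering code, which lives in the Platypus GitHub repo. It assumes cosine similarity as the measure and a greedy keep-first strategy, and it uses toy 2-D vectors in place of real sentence embeddings; the function names are hypothetical.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def filter_near_duplicates(
    embeddings: Sequence[Sequence[float]], threshold: float = 0.8
) -> List[int]:
    """Return the indices of the items to keep, scanning in order.

    An item is dropped when its similarity to any already-kept item
    is above `threshold` (assumed here to be cosine similarity).
    """
    kept: List[int] = []
    for i, emb in enumerate(embeddings):
        if all(cosine_similarity(emb, embeddings[j]) <= threshold for j in kept):
            kept.append(i)
    return kept


# Toy 2-D "embeddings"; real ones would come from a sentence-embedding model.
toy = [
    [1.0, 0.0],  # question 0
    [0.9, 0.1],  # points almost the same way as question 0
    [0.0, 1.0],  # unrelated question
]
print(filter_near_duplicates(toy))  # [0, 2]
```

The greedy scan compares each candidate only against items already kept, so the result depends on input order; that ordering choice is part of the sketch, not something the dataset card specifies.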

## Data Contamination Check

We've removed approximately 200 questions that appear in the Hugging Face benchmark test sets. Please see our [paper](https://arxiv.org/abs/2308.07317) and [project webpage](https://platypus-llm.github.io) for additional information.
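
As a rough illustration of what a contamination check involves, the sketch below drops training records whose question text matches a benchmark test question after simple normalization. It is an assumption-laden simplification: the paper describes the actual procedure, and exact matching on normalized text is only one possible approach. The function names and the sample data are hypothetical.

```python
import re
from typing import Dict, Iterable, List


def normalize(text: str) -> str:
    """Lowercase, replace punctuation with spaces, and collapse whitespace."""
    text = re.sub(r"[^a-z0-9\s]", " ", text.lower())
    return " ".join(text.split())


def drop_benchmark_overlap(
    records: Iterable[Dict[str, str]], benchmark_questions: Iterable[str]
) -> List[Dict[str, str]]:
    """Keep only records whose normalized instruction is not a benchmark question."""
    seen = {normalize(q) for q in benchmark_questions}
    return [r for r in records if normalize(r["instruction"]) not in seen]


records = [
    {"instruction": "What is 2 + 2?", "output": "4"},
    {"instruction": "Name the largest planet.", "output": "Jupiter"},
]
benchmark = ["what is 2 + 2"]  # hypothetical test-set question
clean = drop_benchmark_overlap(records, benchmark)
print([r["instruction"] for r in clean])  # ['Name the largest planet.']
```

Exact matching misses paraphrased questions, so a real check would likely combine it with a similarity-based pass.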

## Model Info

Please see models at [`garage-bAInd`](https://huggingface.co/garage-bAInd).

## Training and filtering code

Please see the [Platypus GitHub repo](https://github.com/arielnlee/Platypus).

## Citations

```bibtex
@article{platypus2023,
  title={Platypus: Quick, Cheap, and Powerful Refinement of LLMs},
  author={Ariel N. Lee and Cole J. Hunter and Nataniel Ruiz},
  journal={arXiv preprint arXiv:2308.07317},
  year={2023}
}
```

```bibtex
@article{lightman2023lets,
  title={Let's Verify Step by Step},
  author={Lightman, Hunter and Kosaraju, Vineet and Burda, Yura and Edwards, Harri and Baker, Bowen and Lee, Teddy and Leike, Jan and Schulman, John and Sutskever, Ilya and Cobbe, Karl},
  journal={arXiv preprint arXiv:2305.20050},
  year={2023}
}
```

```bibtex
@inproceedings{lu2022learn,
  title={Learn to Explain: Multimodal Reasoning via Thought Chains for Science Question Answering},
  author={Lu, Pan and Mishra, Swaroop and Xia, Tony and Qiu, Liang and Chang, Kai-Wei and Zhu, Song-Chun and Tafjord, Oyvind and Clark, Peter and Kalyan, Ashwin},
  booktitle={The 36th Conference on Neural Information Processing Systems (NeurIPS)},
  year={2022}
}
```

```bibtex
@misc{wang2023scibench,
  title={SciBench: Evaluating College-Level Scientific Problem-Solving Abilities of Large Language Models},
  author={Xiaoxuan Wang and Ziniu Hu and Pan Lu and Yanqiao Zhu and Jieyu Zhang and Satyen Subramaniam and Arjun R. Loomba and Shichang Zhang and Yizhou Sun and Wei Wang},
  year={2023},
  eprint={2307.10635},
  archivePrefix={arXiv}
}
```

```bibtex
@inproceedings{yu2020reclor,
  author = {Yu, Weihao and Jiang, Zihang and Dong, Yanfei and Feng, Jiashi},
  title = {ReClor: A Reading Comprehension Dataset Requiring Logical Reasoning},
  booktitle = {International Conference on Learning Representations (ICLR)},
  month = {April},
  year = {2020}
}
```

```bibtex
@article{chen2023theoremqa,
  title={TheoremQA: A Theorem-driven Question Answering dataset},
  author={Chen, Wenhu and Yin, Ming and Ku, Max and Wan, Elaine and Ma, Xueguang and Xu, Jianyu and Xia, Tony and Wang, Xinyi and Lu, Pan},
  journal={arXiv preprint arXiv:2305.12524},
  year={2023}
}
```

```bibtex
@article{hendrycksmath2021,
  title={Measuring Mathematical Problem Solving With the MATH Dataset},
  author={Dan Hendrycks and Collin Burns and Saurav Kadavath and Akul Arora and Steven Basart and Eric Tang and Dawn Song and Jacob Steinhardt},
  journal={NeurIPS},
  year={2021}
}
```

```bibtex
@misc{sawada2023arb,
  title={ARB: Advanced Reasoning Benchmark for Large Language Models},
  author={Tomohiro Sawada and Daniel Paleka and Alexander Havrilla and Pranav Tadepalli and Paula Vidas and Alexander Kranias and John J. Nay and Kshitij Gupta and Aran Komatsuzaki},
  eprint={2307.13692},
  archivePrefix={arXiv},
  year={2023}
}
```

Provider: garage-bAInd

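## Original information summary

### Dataset overview

#### Basic information

- Dataset name: Open-Platypus
- Dataset size:
  - Download size: 15565850 bytes
  - Dataset size: 30776452 bytes
- Language: English (en)
- Size category: 10K<n<100K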

#### Dataset structure

- Configs:
  - Default config (`default`):
    - Data files:
      - Split: train
      - Path: data/train-*
- Dataset info:
  - Features:
    - input: string
    - output: string
    - instruction: string
    - data_source: string
  - Splits:
    - train:
      - Bytes: 30776452
      - Examples: 24926

#### Dataset sources

- Component datasets:
  - PRM800K
  - MATH
  - ScienceQA
  - SciBench
  - ReClor
  - TheoremQA
  - nuprl/leetcode-solutions-python-testgen-gpt4
  - jondurbin/airoboros-gpt4-1.4.1
  - TigerResearch/tigerbot-kaggle-leetcodesolutions-en-2k
  - ARB
  - timdettmers/openassistant-guanaco

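#### Dataset purpose

- Purpose: improve the logical reasoning skills of LLMs (large language models); the dataset was used to train the Platypus2 models.
- Data processing: filtered by keyword search and then with Sentence Transformers, removing questions with a similarity above 80%.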

#### Dataset cleaning

- Cleaning step: approximately 200 questions that appear in the Hugging Face benchmark test sets were removed.

#### Dataset citations

- Cited works:
  - Platypus: Quick, Cheap, and Powerful Refinement of LLMs
  - Let's Verify Step by Step
  - Learn to Explain: Multimodal Reasoning via Thought Chains for Science Question Answering
  - SciBench: Evaluating College-Level Scientific Problem-Solving Abilities of Large Language Models
  - ReClor: A Reading Comprehension Dataset Requiring Logical Reasoning
  - TheoremQA: A Theorem-driven Question Answering dataset
  - Measuring Mathematical Problem Solving With the MATH Dataset
  - ARB: Advanced Reasoning Benchmark for Large Language Models

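## Collected summary

### Dataset introduction

#### Construction

Open-Platypus was built to improve the logical reasoning ability of large language models. The source datasets were filtered by keyword search, and Sentence Transformers was then used to remove questions with a similarity above 80%, which helps keep the data both high in quality and diverse. The dataset draws on sources from several domains, including PRM800K, MATH, and ScienceQA, and after filtering contains 24926 training examples.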

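#### Characteristics

The dataset focuses on training for logical reasoning tasks and covers complex problems in mathematics, science, and other fields. In addition to questions and answers, each record provides an instruction and a data source, which gives model training additional context. The dataset also went through a data contamination check that removed questions appearing in the Hugging Face benchmark test sets, so that the training data stays independent of those benchmarks.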
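
Because every record carries a `data_source` field, records can be counted or filtered per source, for example to leave out sources whose license does not fit a given use. The sketch below is a minimal illustration under assumptions: the source labels `source_a` and `source_b` are placeholders, not the actual values stored in the dataset, and both helper functions are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, List, Set


def count_by_source(records: Iterable[Dict[str, str]]) -> Counter:
    """Count records per `data_source` value."""
    return Counter(r["data_source"] for r in records)


def exclude_sources(
    records: Iterable[Dict[str, str]], excluded: Set[str]
) -> List[Dict[str, str]]:
    """Return the records whose `data_source` is not in `excluded`."""
    return [r for r in records if r["data_source"] not in excluded]


# Placeholder records; the labels below are NOT real data_source values.
sample = [
    {"instruction": "q1", "output": "a1", "data_source": "source_a"},
    {"instruction": "q2", "output": "a2", "data_source": "source_b"},
    {"instruction": "q3", "output": "a3", "data_source": "source_a"},
]
counts = count_by_source(sample)
print(counts["source_a"], counts["source_b"])  # 2 1
print(len(exclude_sources(sample, {"source_b"})))  # 2
```

Inspecting the real label set with `count_by_source` first is the safer order, since the labels have to be read from the data rather than guessed.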

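#### Usage

Open-Platypus can be downloaded from the Hugging Face platform. It is suited to training large language models, particularly to improve logical reasoning. Users can design a training strategy around the features the dataset provides: input, output, instruction, and data source. The code used to build and process the dataset is available in the Platypus GitHub repository, which supports reproduction and further research.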
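
One way to turn the `instruction`, `input`, and `output` features into training text is sketched below. The Alpaca-style prompt template is an assumption made for illustration; the dataset card does not prescribe a prompt format. `build_prompt` and `load_open_platypus` are hypothetical helper names, and the loader relies on the Hugging Face `datasets` package and network access, so it is defined but not called here.

```python
from typing import Dict

# Alpaca-style templates (an assumption; the dataset card fixes no prompt format).
PROMPT_WITH_INPUT = (
    "Below is an instruction that describes a task, paired with an input "
    "that provides further context. Write a response that appropriately "
    "completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Input:\n{input}\n\n### Response:\n"
)
PROMPT_NO_INPUT = (
    "Below is an instruction that describes a task. Write a response that "
    "appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Response:\n"
)


def build_prompt(record: Dict[str, str]) -> str:
    """Render one record's `instruction` and optional `input` as a prompt."""
    if record.get("input", "").strip():
        return PROMPT_WITH_INPUT.format(
            instruction=record["instruction"], input=record["input"]
        )
    return PROMPT_NO_INPUT.format(instruction=record["instruction"])


def load_open_platypus():
    """Load the train split; needs the `datasets` package and network access."""
    from datasets import load_dataset  # imported lazily so the rest runs without it

    return load_dataset("garage-bAInd/Open-Platypus", split="train")


example = {"instruction": "Add the numbers.", "input": "2 and 3", "output": "5"}
# Full training text = prompt followed by the reference output.
print(build_prompt(example) + example["output"])
```

Records with an empty `input` fall back to the shorter template, so the prompt never contains an empty "Input" section.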

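### Background and challenges

#### Background

Open-Platypus aims to improve the logical reasoning ability of large language models (LLMs) and was used to train the Platypus2 models. It was created in 2023 by Ariel N. Lee, Cole J. Hunter, and Nataniel Ruiz, and combines datasets from several domains, such as PRM800K, MATH, and ScienceQA. Keyword search and Sentence Transformers were used to keep only questions with a similarity below 80%, with the goal of strengthening model reasoning. The dataset serves as a resource for research on improving LLM performance in logical reasoning.

#### Current challenges

The main challenges in building Open-Platypus were: keeping data quality consistent, especially when combining data from multiple sources; handling data contamination, for example removing the approximately 200 questions that appear in the Hugging Face benchmark test sets; and making the dataset representative enough to cover the different aspects of logical reasoning. On the research side, the dataset targets the limitations of LLMs on logical reasoning tasks such as mathematical problem solving and science question answering, which place higher demands on a model's reasoning ability.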

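### Common scenarios

#### Typical use cases

In logical reasoning and mathematical problem solving, the main use of Open-Platypus is training large language models to improve their logical reasoning. The dataset combines several sub-datasets that were filtered and cleaned, giving models varied training material intended to improve performance on mathematics and science problem-solving tasks.

#### Derived work

Research based on Open-Platypus has led to a range of related work. This includes improvements to existing models, further exploration and extension of the dataset itself, and new evaluation metrics and algorithms built on it. Together, this work advances research on logical reasoning and lays groundwork for future development.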

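### Recent research

#### Latest research directions

In natural language processing, Open-Platypus targets the logical reasoning ability of large language models (LLMs), and its main research use is training the Platypus2 models. The dataset was built by using keyword search and Sentence Transformers to filter out questions with a similarity above 80%, combining datasets from several domains, including PRM800K, MATH, and ScienceQA. Recent research using the dataset has made progress on logical reasoning tasks and offers new approaches for applying large language models to mathematical problem solving and science question answering.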

The content above was collected and summarized by 遇见数据集.