deepmind/pg19

Hugging Face · updated 2024-01-18 · indexed 2024-05-25

Download link:

https://hf-mirror.com/datasets/deepmind/pg19


Resource overview:

---
annotations_creators:
- expert-generated
language_creators:
- expert-generated
language:
- en
license:
- apache-2.0
multilinguality:
- monolingual
size_categories:
- 10K<n<100K
source_datasets:
- original
task_categories:
- text-generation
task_ids:
- language-modeling
paperswithcode_id: pg-19
pretty_name: PG-19
dataset_info:
  features:
  - name: short_book_title
    dtype: string
  - name: publication_date
    dtype: int32
  - name: url
    dtype: string
  - name: text
    dtype: string
  splits:
  - name: train
    num_bytes: 11453688452
    num_examples: 28602
  - name: validation
    num_bytes: 17402295
    num_examples: 50
  - name: test
    num_bytes: 40482852
    num_examples: 100
  download_size: 11740397875
  dataset_size: 11511573599
---

# Dataset Card for "pg19"

## Table of Contents

- [Dataset Description](#dataset-description)
  - [Dataset Summary](#dataset-summary)
  - [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)
  - [Languages](#languages)
- [Dataset Structure](#dataset-structure)
  - [Data Instances](#data-instances)
  - [Data Fields](#data-fields)
  - [Data Splits](#data-splits)
- [Dataset Creation](#dataset-creation)
  - [Curation Rationale](#curation-rationale)
  - [Source Data](#source-data)
  - [Annotations](#annotations)
  - [Personal and Sensitive Information](#personal-and-sensitive-information)
- [Considerations for Using the Data](#considerations-for-using-the-data)
  - [Social Impact of Dataset](#social-impact-of-dataset)
  - [Discussion of Biases](#discussion-of-biases)
  - [Other Known Limitations](#other-known-limitations)
- [Additional Information](#additional-information)
  - [Dataset Curators](#dataset-curators)
  - [Licensing Information](#licensing-information)
  - [Citation Information](#citation-information)
  - [Contributions](#contributions)

## Dataset Description

- **Homepage:** [https://github.com/deepmind/pg19](https://github.com/deepmind/pg19)

- **Repository:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Paper:** [Compressive Transformers for Long-Range Sequence Modelling](https://arxiv.org/abs/1911.05507)

- **Point of Contact:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Size of downloaded dataset files:** 11.74 GB

- **Size of the generated dataset:** 11.51 GB

- **Total amount of disk used:** 23.25 GB

### Dataset Summary

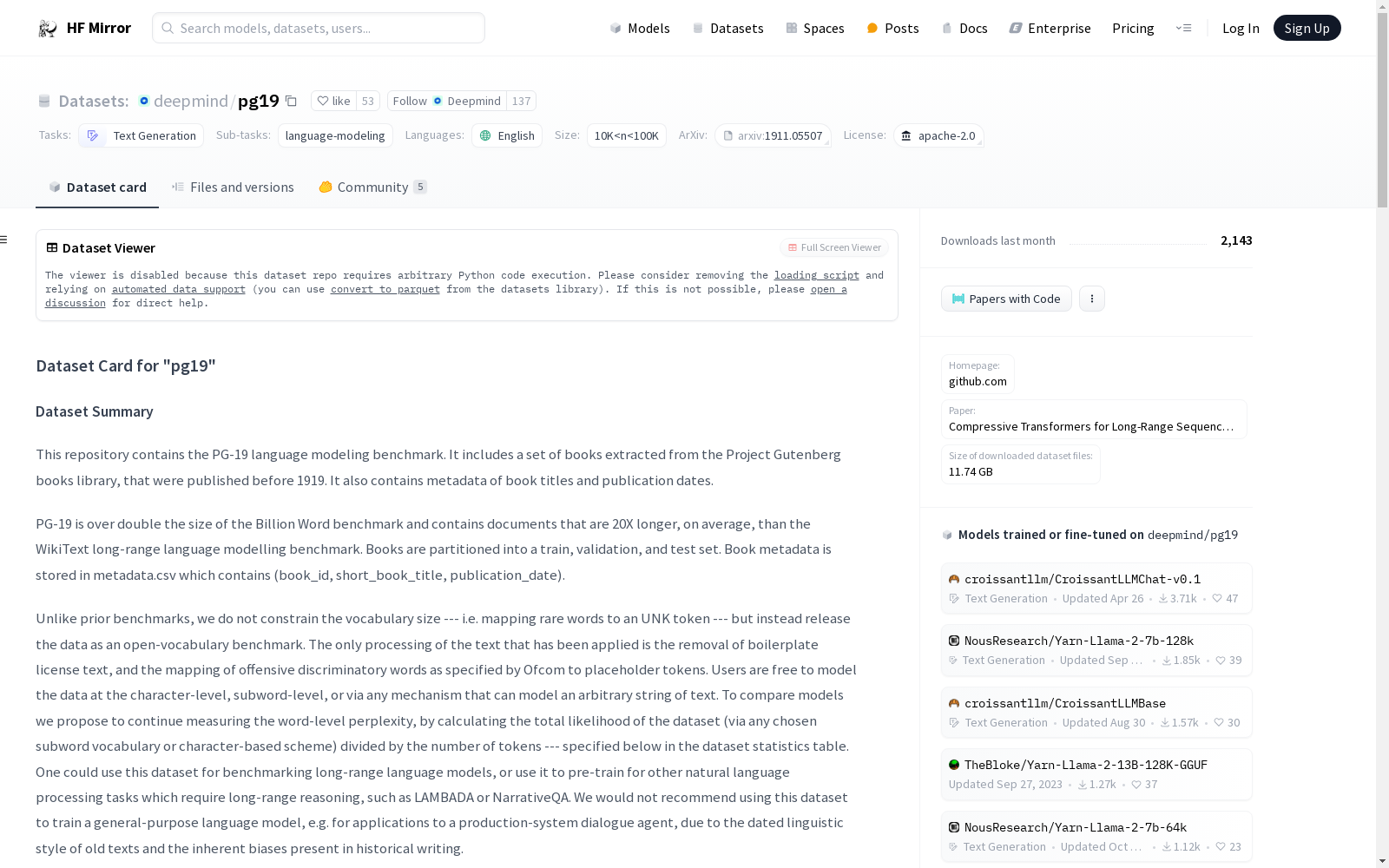

This repository contains the PG-19 language modeling benchmark.

It includes a set of books, extracted from the Project Gutenberg library, that were published before 1919.

It also contains metadata of book titles and publication dates.

PG-19 is more than twice the size of the Billion Word benchmark and contains documents that are, on average, 20 times longer than those in the WikiText long-range language modelling benchmark.

Books are partitioned into train, validation, and test sets. Book metadata is stored in `metadata.csv`, which contains `(book_id, short_book_title, publication_date)`.

Unlike prior benchmarks, we do not constrain the vocabulary size (i.e., we do not map rare words to an UNK token) but instead release the data as an open-vocabulary benchmark. The only processing applied to the text is the removal of boilerplate license text and the mapping of offensive discriminatory words, as specified by Ofcom, to placeholder tokens. Users are free to model the data at the character level, at the subword level, or via any mechanism that can model an arbitrary string of text.
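To illustrate what open-vocabulary modelling means in practice, here is a minimal sketch. The hypothetical helpers below map any string to UTF-8 byte IDs in the range 0 to 255, so an UNK token is never needed and decoding is lossless. Byte-level encoding is only one possible scheme. PG-19 does not prescribe it.

```python
from typing import List


def encode_bytes(text: str) -> List[int]:
    """Map text to byte IDs (0-255); every string is representable, so no UNK is needed."""
    return list(text.encode("utf-8"))


def decode_bytes(ids: List[int]) -> str:
    """Invert encode_bytes; the round trip is lossless for any valid Unicode string."""
    return bytes(ids).decode("utf-8")


sample = "LA FIAMMETTA\n\nBY\n\nGIOVANNI BOCCACCIO"
ids = encode_bytes(sample)
assert decode_bytes(ids) == sample  # nothing was lost or replaced
print(len(ids), all(0 <= i < 256 for i in ids))
```

A byte-level scheme keeps the vocabulary tiny at the cost of longer sequences. This trade-off is one reason the benchmark reports word-level perplexity instead of a per-token score.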

To compare models, we propose continuing to measure word-level perplexity: compute the total log-likelihood of the dataset (under any chosen subword vocabulary or character-based scheme), divide it by the number of word-level tokens, and exponentiate the negated result. The token counts are listed in the dataset statistics table of the original PG-19 release, which is not reproduced in this card.
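The word-level perplexity described above can be computed from whatever unit a model predicts. Sum the natural-log probabilities over all subword or character predictions, divide the negated total by the number of word-level tokens, and exponentiate. The function name and toy inputs below are hypothetical. In a real evaluation, `num_words` would be the official word count for the split.

```python
import math
from typing import Iterable


def word_level_perplexity(token_log_probs: Iterable[float], num_words: int) -> float:
    """Word-level perplexity from per-token natural-log probabilities.

    token_log_probs: log p(token | context) for every subword/character the model
        predicted over the whole split (any tokenization scheme).
    num_words: number of word-level tokens in the same split; this normalizer is
        fixed by the data, not by the model's tokenizer.
    """
    if num_words <= 0:
        raise ValueError("num_words must be positive")
    total_log_likelihood = sum(token_log_probs)
    return math.exp(-total_log_likelihood / num_words)


# Toy numbers: 6 subword predictions, each with probability 0.5, covering 3 words.
log_probs = [math.log(0.5)] * 6
print(word_level_perplexity(log_probs, num_words=3))  # approximately 4.0
```

The normalizer is the word count, not the model's own token count. Models with different tokenizers are therefore scored on the same scale: splitting a word into more pieces does not lower the reported perplexity.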

One could use this dataset for benchmarking long-range language models, or use it to pre-train for other natural language processing tasks which require long-range reasoning, such as LAMBADA or NarrativeQA. We would not recommend using this dataset to train a general-purpose language model, e.g. for applications to a production-system dialogue agent, due to the dated linguistic style of old texts and the inherent biases present in historical writing.

### Supported Tasks and Leaderboards

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Languages

According to the dataset metadata, the text is in English (`en`).

## Dataset Structure

### Data Instances

#### default

- **Size of downloaded dataset files:** 11.74 GB

- **Size of the generated dataset:** 11.51 GB

- **Total amount of disk used:** 23.25 GB

An example of 'train' looks as follows.

This example was too long and was cropped:

```
{
    "publication_date": 1907,
    "short_book_title": "La Fiammetta by Giovanni Boccaccio",
    "text": "\"\\n\\n\\n\\nProduced by Ted Garvin, Dave Morgan and PG Distributed Proofreaders\\n\\n\\n\\n\\nLA FIAMMETTA\\n\\nBY\\n\\nGIOVANNI BOCCACCIO\\n...",
    "url": "http://www.gutenberg.org/ebooks/10006"
}
```
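Each record's `url` points at a Project Gutenberg ebook page. The hypothetical helper below recovers the trailing numeric ID. It assumes URLs keep the `http://www.gutenberg.org/ebooks/<number>` shape seen in the example. This card does not state that this number matches `book_id` in `metadata.csv`; treat that as an assumption.

```python
from urllib.parse import urlparse


def gutenberg_ebook_id(url: str) -> int:
    """Extract the trailing ebook number from a Gutenberg URL (assumed format)."""
    path = urlparse(url).path.rstrip("/")  # e.g. "/ebooks/10006"
    last = path.rsplit("/", 1)[-1]
    if not last.isdigit():
        raise ValueError(f"no numeric ebook id in {url!r}")
    return int(last)


record = {
    "publication_date": 1907,
    "short_book_title": "La Fiammetta by Giovanni Boccaccio",
    "url": "http://www.gutenberg.org/ebooks/10006",
}
print(gutenberg_ebook_id(record["url"]))  # 10006
```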

### Data Fields

The data fields are the same among all splits.

#### default

- `short_book_title`: a `string` feature.

- `publication_date`: an `int32` feature.

- `url`: a `string` feature.

- `text`: a `string` feature.
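Downstream code can mirror the four fields as a typed record. The `PG19Record` type and `validate_record` helper below are hypothetical. They check only the Python types implied by the `string` and `int32` dtypes listed above, including the signed 32-bit range for `publication_date`.

```python
from typing import Any, Dict, TypedDict


class PG19Record(TypedDict):
    short_book_title: str
    publication_date: int  # stored as int32 in the dataset schema
    url: str
    text: str


INT32_MIN, INT32_MAX = -(2**31), 2**31 - 1
EXPECTED = {"short_book_title": str, "publication_date": int, "url": str, "text": str}


def validate_record(rec: Dict[str, Any]) -> PG19Record:
    """Raise if rec does not match the four-field schema; otherwise return it."""
    missing = EXPECTED.keys() - rec.keys()
    if missing:
        raise KeyError(f"missing fields: {sorted(missing)}")
    for name, typ in EXPECTED.items():
        # bool is a subclass of int, so reject it explicitly.
        if not isinstance(rec[name], typ) or isinstance(rec[name], bool):
            raise TypeError(f"{name} should be {typ.__name__}")
    if not INT32_MIN <= rec["publication_date"] <= INT32_MAX:
        raise ValueError("publication_date does not fit in int32")
    return PG19Record(**{k: rec[k] for k in EXPECTED})
```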

### Data Splits

| name  |train|validation|test|
|-------|----:|---------:|---:|
|default|28602|        50| 100|
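The split sizes in the metadata are internally consistent, and a quick calculation gives a rough sense of document length. The sketch below copies `num_bytes` and `num_examples` from the `dataset_info` block. The per-book averages are derived arithmetic, not figures published with the dataset; they cover all four fields, not just `text`. GB here means 10^9 bytes.

```python
# Figures copied from the dataset_info metadata of this card.
SPLITS = {
    "train": {"num_bytes": 11_453_688_452, "num_examples": 28_602},
    "validation": {"num_bytes": 17_402_295, "num_examples": 50},
    "test": {"num_bytes": 40_482_852, "num_examples": 100},
}
DOWNLOAD_SIZE = 11_740_397_875
DATASET_SIZE = 11_511_573_599

# The per-split byte counts add up exactly to dataset_size.
total_bytes = sum(s["num_bytes"] for s in SPLITS.values())
assert total_bytes == DATASET_SIZE

# "Total amount of disk used" equals download size plus generated size (decimal GB).
print(f"{DOWNLOAD_SIZE / 1e9:.2f} GB + {DATASET_SIZE / 1e9:.2f} GB "
      f"= {(DOWNLOAD_SIZE + DATASET_SIZE) / 1e9:.2f} GB")

# Average generated bytes per book in each split.
for name, s in SPLITS.items():
    print(f"{name}: {s['num_bytes'] / s['num_examples']:,.0f} bytes per book")
```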

## Dataset Creation

### Curation Rationale

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

#### Who are the source language producers?

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Annotations

#### Annotation process

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

#### Who are the annotators?

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Personal and Sensitive Information

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Discussion of Biases

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Other Known Limitations

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

## Additional Information

### Dataset Curators

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Licensing Information

The dataset is licensed under [Apache License, Version 2.0](https://www.apache.org/licenses/LICENSE-2.0.html).

### Citation Information

```
@article{raecompressive2019,
  author  = {Rae, Jack W and Potapenko, Anna and Jayakumar, Siddhant M and
             Hillier, Chloe and Lillicrap, Timothy P},
  title   = {Compressive Transformers for Long-Range Sequence Modelling},
  journal = {arXiv preprint},
  url     = {https://arxiv.org/abs/1911.05507},
  year    = {2019},
}
```

### Contributions

Thanks to [@thomwolf](https://github.com/thomwolf), [@lewtun](https://github.com/lewtun), [@lucidrains](https://github.com/lucidrains), [@lhoestq](https://github.com/lhoestq) for adding this dataset.


Provided by:

deepmind

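Original information summary

Dataset overview

Dataset name: PG-19

Dataset description: PG-19 is a language modeling benchmark consisting of books published before 1919, extracted from the Project Gutenberg library. It includes metadata such as book titles and publication dates. PG-19 is more than twice the size of the Billion Word benchmark, and its average document length is 20 times that of the WikiText long-range language modeling benchmark.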

Dataset characteristics:

- Language: English (en)
- License: Apache-2.0
- Multilinguality: monolingual
- Size category: 10K<n<100K
- Source datasets: original
- Task category: text-generation
- Task ID: language-modeling
- Dataset info:
  - Features:
    - short_book_title: string
    - publication_date: integer (int32)
    - url: string
    - text: string
  - Splits:
    - train: 28602 examples, 11453688452 bytes
    - validation: 50 examples, 17402295 bytes
    - test: 100 examples, 40482852 bytes
  - Download size: 11.74 GB
  - Dataset size: 11.51 GB

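Intended use: The dataset is suited to benchmarking long-range language models, or to pre-training for other natural language processing tasks that require long-range reasoning, such as LAMBADA or NarrativeQA. It is not recommended for training general-purpose language models, such as production dialogue agents, because of the dated linguistic style of old texts and the biases inherent in historical writing.

License: The dataset is licensed under the Apache License, Version 2.0.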


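Collected summary

Dataset introduction

Construction

PG-19 is built from books in the Project Gutenberg library that were published before 1919, with boilerplate such as license notices removed. The books' original text is otherwise retained, apart from mapping certain offensive words to placeholder tokens. Metadata such as the short book title, publication date, and URL is attached to each book. PG-19 is more than twice the size of the Billion Word benchmark, and its average document length is 20 times that of WikiText. The dataset is split into training, validation, and test sets to support model training and evaluation.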

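Characteristics

PG-19's main characteristics are its large scale and long documents, which make it well suited to training and evaluating long-range language models. The dataset uses an open vocabulary: rare words are not mapped to an UNK token. Users can therefore model the text at the character level, the subword level, or with any other mechanism that handles arbitrary text. The text also reflects the language of its historical period. This may introduce biases, but it also makes PG-19 a distinctive resource for studying historical language style and long-range reasoning.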

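Usage

PG-19 suits a range of natural language processing tasks, particularly those requiring long-range reasoning, such as LAMBADA and NarrativeQA. Users can load it with the Hugging Face `datasets` library. The training set is for pre-training, the validation set for tuning, and the test set for final evaluation. Because the text reflects historical language, the dataset is not recommended for training general-purpose language models, especially for modern dialogue systems.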
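Below is a minimal loading sketch. It assumes your installed `datasets` version can still load the `deepmind/pg19` repository; this card does not say which versions support it. Depending on the version, an extra argument such as `trust_remote_code=True` may also be required. `load_dataset(..., split=..., streaming=True)` is the `datasets` API. Streaming avoids materializing the roughly 11.5 GB training split on disk. `stream_split`, `take_books`, and `describe` are hypothetical helpers. The import is deferred so the helpers can be tried on in-memory records without the library installed.

```python
from itertools import islice
from typing import Dict, Iterable, Iterator, List


def stream_split(split: str) -> Iterator[Dict]:
    """Stream one PG-19 split ("train", "validation" or "test") from the Hub."""
    from datasets import load_dataset  # deferred: only needed for real downloads

    return iter(load_dataset("deepmind/pg19", split=split, streaming=True))


def take_books(records: Iterable[Dict], n: int) -> List[Dict]:
    """Return the first n records without reading the rest of the stream."""
    return list(islice(records, n))


def describe(record: Dict) -> str:
    """One-line summary: title, publication year, and text length in characters."""
    return (f"{record['short_book_title']} ({record['publication_date']}): "
            f"{len(record['text']):,} chars")


# Example with the real stream (downloads data on demand):
# for book in take_books(stream_split("validation"), 2):
#     print(describe(book))
```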

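Background and challenges

Background overview

PG-19 was released by DeepMind in 2019 as a challenging benchmark for long-sequence language modeling. It consists of books published before 1919, extracted from Project Gutenberg, and so covers a large body of historical text. PG-19 is larger than the Billion Word benchmark, its documents are on average 20 times longer than those in WikiText, and it offers an open-vocabulary language modeling setting. The core research question it addresses is how to model long-range dependencies effectively. This matters for natural language processing tasks such as LAMBADA and NarrativeQA. Its release gave research on long-sequence language models a new benchmark and resource.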

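Current challenges

PG-19 poses several challenges. First, long text sequences require models that capture long-range dependencies efficiently, which strains both model architectures and compute budgets. Second, the language style and vocabulary of historical texts differ markedly from modern language, which may limit how well trained models generalize. Third, the open-vocabulary setting adds flexibility but also raises issues of vocabulary sparsity and ambiguity. Finally, historical texts may contain biases and sensitive content, which must be handled carefully to avoid unwanted social effects in trained models.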
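A single PG-19 book is far longer than a typical attention window, which is the first challenge above. Models such as the Compressive Transformer therefore process each book as a sequence of consecutive segments, carrying memory across them. The hypothetical `book_segments` helper below shows only the segmentation step over an already-tokenized book. The segment length and the tokenizer are assumptions left to the user.

```python
from typing import Iterator, List, Sequence, Tuple


def book_segments(token_ids: Sequence[int], seg_len: int) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield consecutive (inputs, targets) windows over one tokenized book.

    Targets are the inputs shifted by one position, so every token except the
    first is predicted exactly once. The final window may be shorter than seg_len.
    """
    if seg_len <= 0:
        raise ValueError("seg_len must be positive")
    for start in range(0, len(token_ids) - 1, seg_len):
        end = min(start + seg_len, len(token_ids) - 1)
        yield list(token_ids[start:end]), list(token_ids[start + 1:end + 1])


for inputs, targets in book_segments(list(range(10)), seg_len=4):
    print(inputs, targets)
```

The segments are yielded in order and never shuffled within a book. A memory-based model depends on this, because it reuses state from the previous segment.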

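Common scenarios

Classic use cases

The classic use of PG-19 is training and evaluating long-sequence language models. Its documents are far longer than those in earlier benchmarks such as WikiText, so it is especially suited to research on long-range dependency modeling. That research is relevant to tasks such as narrative comprehension (NarrativeQA) and word prediction that requires broad discourse context (LAMBADA). With PG-19, researchers can develop and validate models that handle long, complex texts.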

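Academic problems addressed

PG-19 targets a key difficulty that traditional language models face with long texts: long-range dependencies. It supplies a large volume of long-form text, giving researchers a platform for developing and testing models that capture long-range context. It also serves as a benchmark for measuring progress in long-sequence modeling.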

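Derived and related work

PG-19 was introduced together with the Compressive Transformer, a model architecture designed to handle long-range dependencies, in the paper "Compressive Transformers for Long-Range Sequence Modelling" (Rae et al., 2019). The release of PG-19 has stimulated further research on long-sequence language models. It has also supported work on other long-text tasks, such as narrative understanding and long-text generation.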
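The Compressive Transformer keeps two memories per layer: a FIFO memory of recent activations and a compressed memory of older ones. When a new segment arrives, the oldest activations evicted from the regular memory are compressed by a rate c. They are then appended to the compressed memory, whose oldest entries are discarded. Below is a minimal sketch of that update. It uses mean pooling with stride c, one of the compression functions considered in the paper. Vectors are plain Python lists, the function names are hypothetical, and attention and training are ignored entirely. The sketch assumes the evicted block length is a multiple of c; any remainder is dropped.

```python
from typing import List, Tuple

Vector = List[float]


def mean_pool(vectors: List[Vector], rate: int) -> List[Vector]:
    """Compress by averaging each consecutive group of `rate` vectors (stride = rate).

    A trailing group shorter than `rate` is dropped, giving floor(len / rate) outputs.
    """
    out = []
    for i in range(0, len(vectors) - rate + 1, rate):
        group = vectors[i:i + rate]
        out.append([sum(col) / rate for col in zip(*group)])
    return out


def update_memories(mem: List[Vector], cmem: List[Vector], new: List[Vector],
                    mem_size: int, cmem_size: int, rate: int) -> Tuple[List[Vector], List[Vector]]:
    """One memory step: push `new` into the FIFO memory; compress what falls out."""
    combined = mem + new
    overflow = max(0, len(combined) - mem_size)
    evicted, mem = combined[:overflow], combined[overflow:]
    # Keep only the newest cmem_size compressed entries (none if cmem_size is 0).
    cmem = (cmem + mean_pool(evicted, rate))[-cmem_size:] if cmem_size > 0 else []
    return mem, cmem


mem, cmem = update_memories([], [], [[1.0], [3.0]], mem_size=2, cmem_size=2, rate=2)
mem, cmem = update_memories(mem, cmem, [[5.0], [7.0]], mem_size=2, cmem_size=2, rate=2)
print(mem, cmem)  # [[5.0], [7.0]] [[2.0]]
```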

The summary above was collected and generated by 遇见数据集.