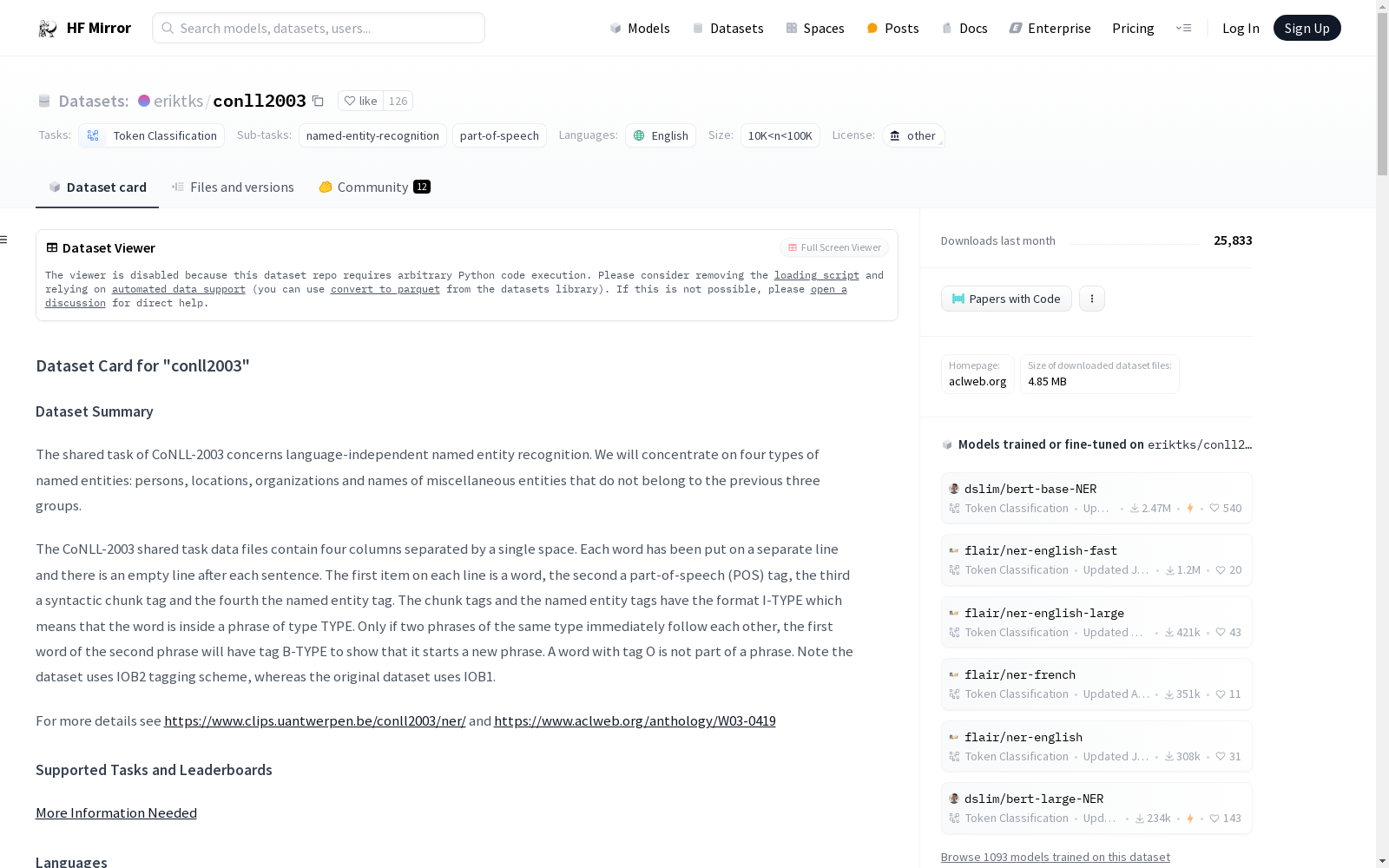

eriktks/conll2003

Source: Hugging Face. Updated 2024-01-18; indexed 2024-05-25.

Download link: https://hf-mirror.com/datasets/eriktks/conll2003

Resource description:

---

annotations_creators:
- crowdsourced
language_creators:
- found
language:
- en
license:
- other
multilinguality:
- monolingual
size_categories:
- 10K<n<100K
source_datasets:
- extended|other-reuters-corpus
task_categories:
- token-classification
task_ids:
- named-entity-recognition
- part-of-speech
paperswithcode_id: conll-2003
pretty_name: CoNLL-2003
dataset_info:
  features:
  - name: id
    dtype: string
  - name: tokens
    sequence: string
  - name: pos_tags
    sequence:
      class_label:
        names:
          '0': '"'
          '1': ''''''
          '2': '#'
          '3': $
          '4': (
          '5': )
          '6': ','
          '7': .
          '8': ':'
          '9': '``'
          '10': CC
          '11': CD
          '12': DT
          '13': EX
          '14': FW
          '15': IN
          '16': JJ
          '17': JJR
          '18': JJS
          '19': LS
          '20': MD
          '21': NN
          '22': NNP
          '23': NNPS
          '24': NNS
          '25': NN|SYM
          '26': PDT
          '27': POS
          '28': PRP
          '29': PRP$
          '30': RB
          '31': RBR
          '32': RBS
          '33': RP
          '34': SYM
          '35': TO
          '36': UH
          '37': VB
          '38': VBD
          '39': VBG
          '40': VBN
          '41': VBP
          '42': VBZ
          '43': WDT
          '44': WP
          '45': WP$
          '46': WRB
  - name: chunk_tags
    sequence:
      class_label:
        names:
          '0': O
          '1': B-ADJP
          '2': I-ADJP
          '3': B-ADVP
          '4': I-ADVP
          '5': B-CONJP
          '6': I-CONJP
          '7': B-INTJ
          '8': I-INTJ
          '9': B-LST
          '10': I-LST
          '11': B-NP
          '12': I-NP
          '13': B-PP
          '14': I-PP
          '15': B-PRT
          '16': I-PRT
          '17': B-SBAR
          '18': I-SBAR
          '19': B-UCP
          '20': I-UCP
          '21': B-VP
          '22': I-VP
  - name: ner_tags
    sequence:
      class_label:
        names:
          '0': O
          '1': B-PER
          '2': I-PER
          '3': B-ORG
          '4': I-ORG
          '5': B-LOC
          '6': I-LOC
          '7': B-MISC
          '8': I-MISC
  config_name: conll2003
  splits:
  - name: train
    num_bytes: 6931345
    num_examples: 14041
  - name: validation
    num_bytes: 1739223
    num_examples: 3250
  - name: test
    num_bytes: 1582054
    num_examples: 3453
  download_size: 982975
  dataset_size: 10252622
train-eval-index:
- config: conll2003
  task: token-classification
  task_id: entity_extraction
  splits:
    train_split: train
    eval_split: test
  col_mapping:
    tokens: tokens
    ner_tags: tags
  metrics:
  - type: seqeval
    name: seqeval

---

# Dataset Card for "conll2003"

## Table of Contents

- [Dataset Description](#dataset-description)
  - [Dataset Summary](#dataset-summary)
  - [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)
  - [Languages](#languages)
- [Dataset Structure](#dataset-structure)
  - [Data Instances](#data-instances)
  - [Data Fields](#data-fields)
  - [Data Splits](#data-splits)
- [Dataset Creation](#dataset-creation)
  - [Curation Rationale](#curation-rationale)
  - [Source Data](#source-data)
  - [Annotations](#annotations)
  - [Personal and Sensitive Information](#personal-and-sensitive-information)
- [Considerations for Using the Data](#considerations-for-using-the-data)
  - [Social Impact of Dataset](#social-impact-of-dataset)
  - [Discussion of Biases](#discussion-of-biases)
  - [Other Known Limitations](#other-known-limitations)
- [Additional Information](#additional-information)
  - [Dataset Curators](#dataset-curators)
  - [Licensing Information](#licensing-information)
  - [Citation Information](#citation-information)
  - [Contributions](#contributions)

## Dataset Description

- **Homepage:** [https://www.aclweb.org/anthology/W03-0419/](https://www.aclweb.org/anthology/W03-0419/)

- **Repository:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Paper:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Point of Contact:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Size of downloaded dataset files:** 4.85 MB

- **Size of the generated dataset:** 10.26 MB

- **Total amount of disk used:** 15.11 MB

### Dataset Summary

The CoNLL-2003 shared task concerns language-independent named entity recognition. It concentrates on four types of named entities: persons, locations, organizations, and names of miscellaneous entities that do not belong to the previous three groups.

The CoNLL-2003 shared task data files contain four columns separated by a single space. Each word is on a separate line, and there is an empty line after each sentence. The first item on each line is a word, the second a part-of-speech (POS) tag, the third a syntactic chunk tag, and the fourth the named entity tag.

In the original files, the chunk tags and the named entity tags follow the IOB1 scheme: a tag of the form I-TYPE means that the word is inside a phrase of type TYPE, and only when two phrases of the same type immediately follow each other does the first word of the second phrase get the tag B-TYPE to show that it starts a new phrase. A word with tag O is not part of a phrase. Note that this dataset uses the IOB2 tagging scheme instead, in which the first word of every phrase is tagged B-TYPE.

For more details see https://www.clips.uantwerpen.be/conll2003/ner/ and https://www.aclweb.org/anthology/W03-0419
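The difference between the IOB1 tags of the original files and the IOB2 tags used here can be illustrated with a small conversion routine. This is a minimal sketch, not the code used to build the dataset; the function name `iob1_to_iob2` and the sample tag sequence are made up for illustration.

```python
def iob1_to_iob2(tags):
    """Convert one sentence's IOB1 tags to IOB2 (every phrase starts with B-TYPE)."""
    converted = []
    prev_type = None  # phrase type of the previous token, or None after an "O" tag
    for tag in tags:
        if tag == "O":
            converted.append(tag)
            prev_type = None
            continue
        prefix, _, phrase_type = tag.partition("-")
        # In IOB1 an I- tag opens a new phrase whenever the previous token
        # was "O" or belonged to a phrase of a different type.
        if prefix == "I" and prev_type != phrase_type:
            converted.append("B-" + phrase_type)
        else:
            converted.append(tag)
        prev_type = phrase_type
    return converted


# Hypothetical IOB1 sequence: one ORG phrase, then two adjacent PER phrases.
iob1 = ["O", "I-ORG", "I-ORG", "O", "I-PER", "B-PER", "I-PER"]
iob2 = iob1_to_iob2(iob1)
print(iob2)
```

Tags that already carry a `B-` prefix are left unchanged, so applying the function to IOB2 input returns the same sequence.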

### Supported Tasks and Leaderboards

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Languages

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

## Dataset Structure

### Data Instances

#### conll2003

- **Size of downloaded dataset files:** 4.85 MB

- **Size of the generated dataset:** 10.26 MB

- **Total amount of disk used:** 15.11 MB

An example of 'train' looks as follows.

```
{
    "chunk_tags": [11, 12, 12, 21, 13, 11, 11, 21, 13, 11, 12, 13, 11, 21, 22, 11, 12, 17, 11, 21, 17, 11, 12, 12, 21, 22, 22, 13, 11, 0],
    "id": "0",
    "ner_tags": [0, 3, 4, 0, 0, 0, 0, 0, 0, 7, 0, 0, 0, 0, 0, 7, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0],
    "pos_tags": [12, 22, 22, 38, 15, 22, 28, 38, 15, 16, 21, 35, 24, 35, 37, 16, 21, 15, 24, 41, 15, 16, 21, 21, 20, 37, 40, 35, 21, 7],
    "tokens": ["The", "European", "Commission", "said", "on", "Thursday", "it", "disagreed", "with", "German", "advice", "to", "consumers", "to", "shun", "British", "lamb", "until", "scientists", "determine", "whether", "mad", "cow", "disease", "can", "be", "transmitted", "to", "sheep", "."]
}
```

The original data files use special `-DOCSTART-` lines as boundaries between documents; these lines are filtered out in this implementation.
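To make the raw file layout concrete, the following sketch reads four-column lines into sentences and drops `-DOCSTART-` lines. It is an illustration under stated assumptions, not the loader behind this dataset: the names `read_conll` and `FIELDS` are hypothetical, the sample text is hand-written from the example above (with IOB2 tags), and the filler columns on the `-DOCSTART-` line are assumed.

```python
FIELDS = ("tokens", "pos_tags", "chunk_tags", "ner_tags")


def read_conll(lines):
    """Group four-column CoNLL-2003 lines into sentences, skipping -DOCSTART- lines."""
    sentences = []
    current = {field: [] for field in FIELDS}
    for raw in lines:
        line = raw.strip()
        # A blank line ends a sentence; a -DOCSTART- line only marks a document boundary.
        if not line or line.startswith("-DOCSTART-"):
            if current["tokens"]:
                sentences.append(current)
                current = {field: [] for field in FIELDS}
            continue
        columns = line.split()
        if len(columns) != len(FIELDS):
            raise ValueError(f"expected {len(FIELDS)} columns, got: {line!r}")
        for field, value in zip(FIELDS, columns):
            current[field].append(value)
    if current["tokens"]:  # flush the last sentence if there is no trailing blank line
        sentences.append(current)
    return sentences


# Hand-written sample in the four-column layout (word, POS, chunk, NER).
sample = """-DOCSTART- -X- -X- O

The DT B-NP O
European NNP I-NP B-ORG
Commission NNP I-NP I-ORG
said VBD B-VP O

it PRP B-NP O
disagreed VBD B-VP O
"""

sentences = read_conll(sample.splitlines())
for sentence in sentences:
    print(sentence["tokens"], sentence["ner_tags"])
```

The tags stay as strings here; mapping them to the integer labels listed under Data Fields is a separate step.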

### Data Fields

The data fields are the same among all splits.

#### conll2003

- `id`: a `string` feature.

- `tokens`: a `list` of `string` features.

- `pos_tags`: a `list` of classification labels (`int`). Full tagset with indices:

```python
{'"': 0, "''": 1, '#': 2, '$': 3, '(': 4, ')': 5, ',': 6, '.': 7, ':': 8, '``': 9, 'CC': 10, 'CD': 11, 'DT': 12,
 'EX': 13, 'FW': 14, 'IN': 15, 'JJ': 16, 'JJR': 17, 'JJS': 18, 'LS': 19, 'MD': 20, 'NN': 21, 'NNP': 22, 'NNPS': 23,
 'NNS': 24, 'NN|SYM': 25, 'PDT': 26, 'POS': 27, 'PRP': 28, 'PRP$': 29, 'RB': 30, 'RBR': 31, 'RBS': 32, 'RP': 33,
 'SYM': 34, 'TO': 35, 'UH': 36, 'VB': 37, 'VBD': 38, 'VBG': 39, 'VBN': 40, 'VBP': 41, 'VBZ': 42, 'WDT': 43,
 'WP': 44, 'WP$': 45, 'WRB': 46}
```

- `chunk_tags`: a `list` of classification labels (`int`). Full tagset with indices:

```python
{'O': 0, 'B-ADJP': 1, 'I-ADJP': 2, 'B-ADVP': 3, 'I-ADVP': 4, 'B-CONJP': 5, 'I-CONJP': 6, 'B-INTJ': 7, 'I-INTJ': 8,
 'B-LST': 9, 'I-LST': 10, 'B-NP': 11, 'I-NP': 12, 'B-PP': 13, 'I-PP': 14, 'B-PRT': 15, 'I-PRT': 16, 'B-SBAR': 17,
 'I-SBAR': 18, 'B-UCP': 19, 'I-UCP': 20, 'B-VP': 21, 'I-VP': 22}
```

- `ner_tags`: a `list` of classification labels (`int`). Full tagset with indices:

```python
{'O': 0, 'B-PER': 1, 'I-PER': 2, 'B-ORG': 3, 'I-ORG': 4, 'B-LOC': 5, 'I-LOC': 6, 'B-MISC': 7, 'I-MISC': 8}
```
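Because the tag fields store integer indices, a common first step is to map them back to label names and group the IOB2 tags into entity spans. The sketch below does this for the `train` example shown under Data Instances, using the `ner_tags` mapping above. The names `NER_NAMES` and `extract_entities` are hypothetical and not part of any library.

```python
# Label names ordered by index, taken from the ner_tags mapping above.
NER_NAMES = ["O", "B-PER", "I-PER", "B-ORG", "I-ORG", "B-LOC", "I-LOC", "B-MISC", "I-MISC"]

tokens = ["The", "European", "Commission", "said", "on", "Thursday", "it", "disagreed",
          "with", "German", "advice", "to", "consumers", "to", "shun", "British", "lamb",
          "until", "scientists", "determine", "whether", "mad", "cow", "disease", "can",
          "be", "transmitted", "to", "sheep", "."]
ner_tags = [0, 3, 4, 0, 0, 0, 0, 0, 0, 7, 0, 0, 0, 0, 0, 7,
            0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0]


def extract_entities(tokens, tag_ids, names=NER_NAMES):
    """Return (type, text) pairs for each entity span encoded with IOB2 tags."""
    entities = []
    current_type, current_tokens = None, []
    for token, tag_id in zip(tokens, tag_ids):
        label = names[tag_id]
        if label.startswith("B-"):
            # A B- tag always opens a new span, closing any span in progress.
            if current_tokens:
                entities.append((current_type, " ".join(current_tokens)))
            current_type, current_tokens = label[2:], [token]
        elif label.startswith("I-") and current_type == label[2:]:
            current_tokens.append(token)
        else:
            # "O" (or a stray I- tag with no matching B-) ends the current span.
            if current_tokens:
                entities.append((current_type, " ".join(current_tokens)))
            current_type, current_tokens = None, []
    if current_tokens:
        entities.append((current_type, " ".join(current_tokens)))
    return entities


entities = extract_entities(tokens, ner_tags)
print(entities)
```

The same pattern applies to `chunk_tags` when given the chunk label list instead of `NER_NAMES`.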

### Data Splits

| name      | train | validation | test |
|-----------|------:|-----------:|-----:|
| conll2003 | 14041 |       3250 | 3453 |

## Dataset Creation

### Curation Rationale

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

#### Who are the source language producers?

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Annotations

#### Annotation process

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

#### Who are the annotators?

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Personal and Sensitive Information

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Discussion of Biases

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Other Known Limitations

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

## Additional Information

### Dataset Curators

[More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Licensing Information

From the [CoNLL2003 shared task](https://www.clips.uantwerpen.be/conll2003/ner/) page:

> The English data is a collection of news wire articles from the Reuters Corpus. The annotation has been done by people of the University of Antwerp. Because of copyright reasons we only make available the annotations. In order to build the complete data sets you will need access to the Reuters Corpus. It can be obtained for research purposes without any charge from NIST.

The copyrights are defined below, from the [Reuters Corpus page](https://trec.nist.gov/data/reuters/reuters.html):

> The stories in the Reuters Corpus are under the copyright of Reuters Ltd and/or Thomson Reuters, and their use is governed by the following agreements:
>
> [Organizational agreement](https://trec.nist.gov/data/reuters/org_appl_reuters_v4.html)
>
> This agreement must be signed by the person responsible for the data at your organization, and sent to NIST.
>
> [Individual agreement](https://trec.nist.gov/data/reuters/ind_appl_reuters_v4.html)
>
> This agreement must be signed by all researchers using the Reuters Corpus at your organization, and kept on file at your organization.

### Citation Information

```
@inproceedings{tjong-kim-sang-de-meulder-2003-introduction,
    title = "Introduction to the {C}o{NLL}-2003 Shared Task: Language-Independent Named Entity Recognition",
    author = "Tjong Kim Sang, Erik F. and De Meulder, Fien",
    booktitle = "Proceedings of the Seventh Conference on Natural Language Learning at {HLT}-{NAACL} 2003",
    year = "2003",
    url = "https://www.aclweb.org/anthology/W03-0419",
    pages = "142--147",
}
```

### Contributions

Thanks to [@jplu](https://github.com/jplu), [@vblagoje](https://github.com/vblagoje), [@lhoestq](https://github.com/lhoestq) for adding this dataset.


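Provider: eriktks

## Summary of Original Information

### Dataset Overview

#### Basic Information

- Dataset name: CoNLL-2003
- Language: English
- License: other
- Multilinguality: monolingual
- Dataset size: 10K<n<100K
- Source dataset: extended from other-reuters-corpus
- Task categories:
  - Part-of-speech tagging
  - Named entity recognition

#### Dataset Structure

- Features:
  - `id`: string
  - `tokens`: sequence of strings
  - `pos_tags`: sequence of class labels, covering 47 part-of-speech tags
  - `chunk_tags`: sequence of class labels, covering 23 syntactic chunk tags
  - `ner_tags`: sequence of class labels, covering 9 named entity tags

#### Data Splits

- Training set: 14041 examples
- Validation set: 3250 examples
- Test set: 3453 examples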


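## Collected Summary

### Dataset Introduction

#### Construction

The CoNLL-2003 dataset is built from news articles in the Reuters Corpus. The card metadata lists the annotations as crowdsourced, although the shared-task page states that the annotation was done by people at the University of Antwerp. The dataset covers four types of named entities: persons, locations, organizations, and miscellaneous entities. Each word is annotated individually with a part-of-speech tag, a syntactic chunk tag, and a named entity tag, using the IOB2 tagging scheme. The dataset was built to support language-independent named entity recognition and gives researchers a standardized benchmark.

#### Characteristics

The main characteristic of CoNLL-2003 is its multi-layer annotation: part-of-speech tags, syntactic chunk tags, and named entity tags, covering four main entity types. The dataset is moderate in size, with 14,041 training examples, which makes it suitable for training and evaluating named entity recognition models. Its annotation quality is considered high, and it can be used for several natural language processing tasks, such as entity extraction and part-of-speech tagging.

#### Usage

CoNLL-2003 is suited to named entity recognition (NER) and part-of-speech (POS) tagging. Researchers load the training, validation, and test splits to train and evaluate models. Each word carries a POS tag, a syntactic chunk tag, and a named entity tag, so the annotation layer used for training can be chosen according to the task.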

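### Background and Challenges

#### Background

The CoNLL-2003 dataset was created in 2003 by Erik F. Tjong Kim Sang and Fien De Meulder to advance research on language-independent named entity recognition (NER). It is based on the Reuters news corpus and focuses on four types of named entities: persons, locations, organizations, and miscellaneous entities. Its release gave a strong push to natural language processing, especially sequence labeling, and it became one of the standard benchmarks for evaluating NER models.

#### Current Challenges

The main challenge of CoNLL-2003 lies in its annotation tasks, which include named entity recognition and part-of-speech tagging. During construction, the main difficulty was keeping the annotations consistent and accurate while maintaining data quality. In addition, because the dataset is drawn from a news corpus, it may carry domain bias, which makes it harder for models trained on it to generalize.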

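### Common Scenarios

#### Typical Use

CoNLL-2003 is widely used in natural language processing for named entity recognition (NER). It contains four types of named entities: persons, locations, organizations, and miscellaneous entities. With its detailed annotations, researchers can train and evaluate NER models that identify the key entities in text.

#### Derived Work

Many well-known lines of work build on CoNLL-2003. Researchers have proposed a range of NER models, including methods based on conditional random fields (CRF) and deep learning models such as LSTM and BERT. These models substantially outperform earlier approaches on CoNLL-2003 and have advanced NER technology. The dataset has also been used in research on multi-task learning and cross-lingual NER, further extending its influence in natural language processing.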

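### Recent Research

#### Latest Research Directions

In recent years, CoNLL-2003 has continued to play an important role in frontier natural language processing research, especially in named entity recognition (NER) and part-of-speech (POS) tagging. With the rapid development of deep learning, researchers keep exploring how pretrained language models such as BERT and GPT can improve NER performance. Cross-domain and cross-lingual NER have also become active topics, aiming to address differences in data distribution across languages and domains. This research advances NER technology and provides a foundation for applications such as information extraction and knowledge graph construction.

The content above was collected and summarized by 遇见数据集.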