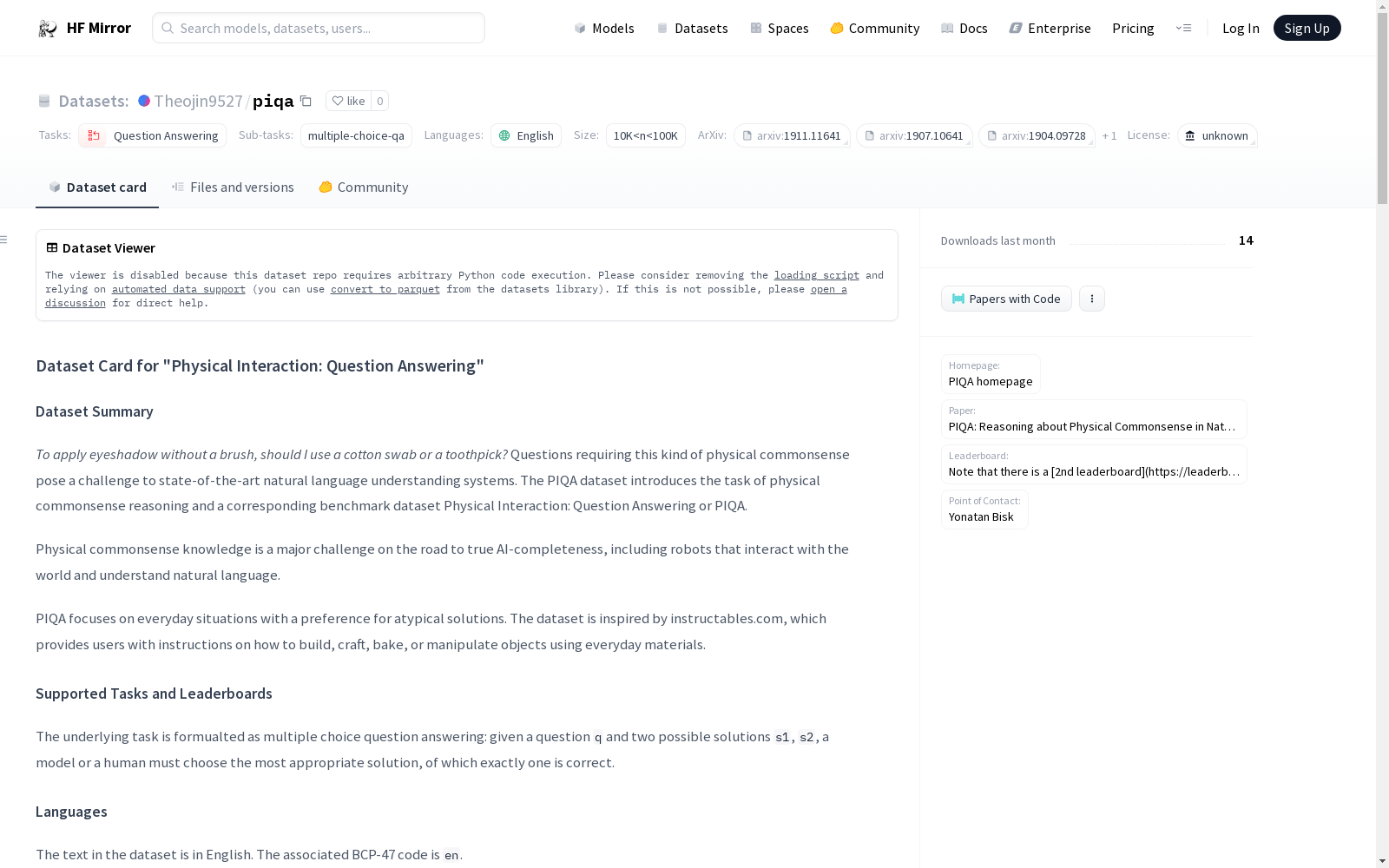

Theojin9527/piqa

Source: Hugging Face, updated 2024-03-31, indexed 2024-06-11

Download link: https://hf-mirror.com/datasets/Theojin9527/piqa

Resource description:

---
annotations_creators:
- crowdsourced
language_creators:
- crowdsourced
- found
language:
- en
license:
- unknown
multilinguality:
- monolingual
size_categories:
- 10K<n<100K
source_datasets:
- original
task_categories:
- question-answering
task_ids:
- multiple-choice-qa
paperswithcode_id: piqa
pretty_name: 'Physical Interaction: Question Answering'
dataset_info:
  features:
  - name: goal
    dtype: string
  - name: sol1
    dtype: string
  - name: sol2
    dtype: string
  - name: label
    dtype:
      class_label:
        names:
          '0': '0'
          '1': '1'
  config_name: plain_text
  splits:
  - name: train
    num_bytes: 4104026
    num_examples: 16113
  - name: test
    num_bytes: 761521
    num_examples: 3084
  - name: validation
    num_bytes: 464321
    num_examples: 1838
  download_size: 2638625
  dataset_size: 5329868
---

# Dataset Card for "Physical Interaction: Question Answering"

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** [PIQA homepage](https://yonatanbisk.com/piqa/)

- **Paper:** [PIQA: Reasoning about Physical Commonsense in Natural Language](https://arxiv.org/abs/1911.11641)

- **Leaderboard:** [Official leaderboard](https://yonatanbisk.com/piqa/) *Note that there is a [2nd leaderboard](https://leaderboard.allenai.org/physicaliqa) featuring a different (blind) test set with 3,446 examples as part of the Machine Commonsense DARPA project.*

- **Point of Contact:** [Yonatan Bisk](https://yonatanbisk.com/piqa/)

### Dataset Summary

*To apply eyeshadow without a brush, should I use a cotton swab or a toothpick?*

Questions requiring this kind of physical commonsense pose a challenge to state-of-the-art natural language understanding systems. The PIQA paper introduces the task of physical commonsense reasoning and a corresponding benchmark dataset, Physical Interaction: Question Answering (PIQA). Physical commonsense knowledge is a major challenge on the road to true AI-completeness, including robots that interact with the world and understand natural language.

PIQA focuses on everyday situations with a preference for atypical solutions. The dataset is inspired by instructables.com, which provides users with instructions on how to build, craft, bake, or manipulate objects using everyday materials.

### Supported Tasks and Leaderboards

The underlying task is formulated as multiple-choice question answering: given a question `q` and two possible solutions `s1` and `s2`, exactly one of which is correct, a model or a human must choose the more appropriate solution.
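The two-way choice described above can be sketched as follows. This is a minimal illustration, not the benchmark's official evaluation code: the `Scorer` type, `choose`, and `accuracy` are hypothetical names, and the scoring function stands in for whatever model assigns a plausibility score to a (question, solution) pair.

```python
from typing import Callable, Iterable, Tuple

# Hypothetical interface: maps (question, solution) to a plausibility score.
Scorer = Callable[[str, str], float]
Example = Tuple[str, str, str, int]  # (q, s1, s2, gold label in {0, 1})


def choose(q: str, s1: str, s2: str, score: Scorer) -> int:
    """Return 0 if s1 scores at least as high as s2, otherwise 1."""
    return 0 if score(q, s1) >= score(q, s2) else 1


def accuracy(examples: Iterable[Example], score: Scorer) -> float:
    """Fraction of examples where the chosen solution matches the gold label."""
    examples = list(examples)
    hits = sum(choose(q, s1, s2, score) == gold for q, s1, s2, gold in examples)
    return hits / len(examples)
```

Ties are resolved in favor of `s1` here, which is an arbitrary choice made only for this sketch.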

### Languages

The text in the dataset is in English. The associated BCP-47 code is `en`.

## Dataset Structure

### Data Instances

An example looks like this:

```
{
  "goal": "How do I ready a guinea pig cage for it's new occupants?",
  "sol1": "Provide the guinea pig with a cage full of a few inches of bedding made of ripped paper strips, you will also need to supply it with a water bottle and a food dish.",
  "sol2": "Provide the guinea pig with a cage full of a few inches of bedding made of ripped jeans material, you will also need to supply it with a water bottle and a food dish.",
  "label": 0
}
```

Note that the test set contains no labels. Predictions need to be submitted to the leaderboard.
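One way to turn such an instance into model inputs is sketched below. The helper name `to_candidates` is hypothetical, and the sketch assumes that unlabeled test examples either omit `label` or carry a placeholder value outside `{0, 1}` (for instance `-1`); check how your copy of the data represents them.

```python
from typing import List, Optional, Tuple


def to_candidates(example: dict) -> Tuple[List[str], Optional[int]]:
    """Build one text per solution and return the gold index if it is known."""
    candidates = [f"{example['goal']} {example[key]}" for key in ("sol1", "sol2")]
    label = example.get("label")
    # Assumption: anything other than 0 or 1 means "no label available".
    gold = label if label in (0, 1) else None
    return candidates, gold
```

A returned gold index of `None` signals that the example can only be scored by submitting predictions to the leaderboard.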

### Data Fields

- `goal`: the question which requires physical commonsense to be answered correctly

- `sol1`: the first solution

- `sol2`: the second solution

- `label`: the correct solution. `0` refers to `sol1` and `1` refers to `sol2`
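The field descriptions above can be checked mechanically. The following is a small sketch for labeled records only; `validate_record` and `correct_solution` are hypothetical helper names, not part of any library.

```python
from typing import List


def validate_record(record: dict) -> List[str]:
    """Return a list of schema problems; an empty list means the record is valid."""
    problems = []
    for field in ("goal", "sol1", "sol2"):
        if not isinstance(record.get(field), str):
            problems.append(f"{field} must be a string")
    if record.get("label") not in (0, 1):
        problems.append("label must be 0 or 1")
    return problems


def correct_solution(record: dict) -> str:
    """Map the label to its solution text: 0 selects sol1, 1 selects sol2."""
    return record["sol1"] if record["label"] == 0 else record["sol2"]
```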

### Data Splits

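The dataset contains roughly 16,000 examples for training, 2,000 for development, and 3,000 for testing. The exact counts listed in the metadata of this card are 16,113 (train), 1,838 (validation), and 3,084 (test).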
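As a quick arithmetic check, the share of each split can be computed from the example counts in the `splits` metadata of this card. The names `SPLIT_SIZES` and `split_shares` are introduced here only for illustration.

```python
# Example counts copied from the `splits` metadata of this dataset card.
SPLIT_SIZES = {"train": 16113, "validation": 1838, "test": 3084}


def split_shares(sizes: dict) -> dict:
    """Return each split's share of all examples, in percent, to one decimal."""
    total = sum(sizes.values())
    return {name: round(100 * count / total, 1) for name, count in sizes.items()}


shares = split_shares(SPLIT_SIZES)
```

With these counts the total is 21,035 examples, of which roughly three quarters are in the training split.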

## Dataset Creation

### Curation Rationale

The goal of the dataset is to construct a resource that requires concrete physical reasoning.

### Source Data

The authors provide annotators with a prompt derived from instructables.com. The Instructables website is a crowdsourced collection of instructions for doing everything from cooking to car repair. In most cases, users provide images or videos detailing each step and a list of tools that will be required. Most goals are simultaneously rare and unsurprising: while an annotator is unlikely to have built a UV-fluorescent steampunk lamp or made a backpack out of duct tape, it is not surprising that someone interested in home crafting would create these, nor will the tools and materials be unfamiliar to the average person. First, using these examples as the seed for annotation helps remind annotators about the less prototypical uses of everyday objects. Second, and equally important, instructions build on one another, so any QA pair inspired by an instructable is more likely to explicitly state assumptions about what preconditions need to be met to start the task and what postconditions define success.

Annotators were asked to glance at the instructions of an instructable and either pull out two component tasks or let it inspire them to construct two. They would then articulate the goal (often centered on atypical materials) and how to achieve it. In addition, annotators were asked to provide a modification of their own solution that makes it invalid (the negative solution), often subtly.

#### Initial Data Collection and Normalization

During validation, examples with low agreement were removed from the data.

The dataset is further cleaned to remove stylistic artifacts and trivial examples, which have been shown to artificially inflate model performance on previous NLI benchmarks. The cleaning uses the AFLite algorithm ([Sakaguchi et al., 2020](https://arxiv.org/abs/1907.10641); [Sap et al., 2019](https://arxiv.org/abs/1904.09728)), which is an improvement on adversarial filtering ([Zellers et al., 2018](https://arxiv.org/abs/1808.05326)).

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

Annotations are by construction obtained when crowdsourcers complete the prompt.

#### Who are the annotators?

Paid crowdsourcers

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

Unknown

### Citation Information

```
@inproceedings{Bisk2020,
  author = {Yonatan Bisk and Rowan Zellers and
            Ronan Le Bras and Jianfeng Gao
            and Yejin Choi},
  title = {PIQA: Reasoning about Physical Commonsense in
           Natural Language},
  booktitle = {Thirty-Fourth AAAI Conference on
               Artificial Intelligence},
  year = {2020},
}
```

### Contributions

Thanks to [@VictorSanh](https://github.com/VictorSanh) for adding this dataset.

Provider: Theojin9527

Original Information Summary

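Dataset Overview

Dataset name: Physical Interaction: Question Answering (PIQA)

Dataset summary: PIQA is a question-answering dataset focused on physical commonsense reasoning, intended to evaluate and improve how natural language understanding systems perform in everyday physical-interaction scenarios. For each question, a model or a human must choose the most appropriate solution, exactly one of which is correct.

Dataset Details

Language

- Primary language: English (`en`)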

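Dataset Structure

- Data instances: each instance contains a goal question (`goal`), two solutions (`sol1` and `sol2`), and a label (`label`) indicating the correct answer.

- Data fields:

  - `goal`: string; the question that requires physical commonsense to answer correctly.

  - `sol1`: string; the first solution.

  - `sol2`: string; the second solution.

  - `label`: class label; `0` means `sol1` is correct, `1` means `sol2` is correct.

- Data splits:

  - Training set: 16,113 instances

  - Test set: 3,084 instances

  - Validation set: 1,838 instances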

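Dataset Creation

- Source data: the data is derived from instructables.com, a website that provides instructions for a wide range of everyday tasks.

- Annotation process: carried out through crowdsourcing; annotators write questions and solutions based on the content of the instructional website.

- Annotators: paid crowdworkers.

License Information

- License: unknown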

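AI-Collected Summary

Dataset Introduction

Construction

PIQA was built primarily through crowdsourcing, drawing inspiration from instructional websites such as instructables.com to construct tasks that involve everyday physical commonsense reasoning. The dataset's creators designed a prompt that guided annotators, on the basis of this instructional content, to write two component tasks and, for each task, to provide a goal, a solution, and a variant that renders the solution invalid. Built this way, the dataset aims to capture unusual but plausible uses of everyday objects.

Characteristics

PIQA focuses on physical commonsense reasoning and requires models to understand and reason about physical interactions in daily life. The dataset contains about 16,000 training examples, 2,000 development examples, and 3,000 test examples covering a variety of everyday situations. Each example consists of a question (the goal), two solutions, and a label for the correct answer. In addition, stylistic artifacts and trivial examples were removed during construction to avoid artificially inflating model performance.

Usage

When using PIQA, researchers can work with its training, validation, and test splits to train and evaluate models on physical commonsense reasoning. The dataset is stored in JSON format and can be loaded directly into a program for processing. During training, researchers design the model architecture and loss function around the question, the solutions, and the label so that the model predicts the correct answer. The test-set labels are hidden and are used to evaluate model performance when predictions are submitted to the leaderboard.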
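As an illustration of loading JSON-formatted records, the sketch below pairs records with their labels. It assumes a layout with one JSON object per line and a separate text file holding one label per line; that layout and the helper name `pair_records` are assumptions made for this example, so adapt them to the files you actually have.

```python
import json
from typing import List


def pair_records(jsonl_text: str, labels_text: str) -> List[dict]:
    """Attach the n-th label line to the n-th JSON record."""
    records = [json.loads(line) for line in jsonl_text.splitlines() if line.strip()]
    labels = [int(line) for line in labels_text.splitlines() if line.strip()]
    if len(records) != len(labels):
        raise ValueError(f"{len(records)} records but {len(labels)} labels")
    return [{**record, "label": label} for record, label in zip(records, labels)]
```

For the unlabeled test split there is no label file to pair with, so the records would be used as parsed.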

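Background and Challenges

Background

Physical commonsense reasoning is an important challenge in natural language understanding and matters for achieving true AI-completeness. PIQA was created in 2020 by Yonatan Bisk and colleagues to advance work on physical commonsense reasoning. The dataset brings together roughly 16,000 training examples, 2,000 development examples, and 3,000 test examples, all drawn from concrete everyday situations, especially those involving atypical solutions. Its design is inspired by instructables.com, a user-contributed collection of instructions for everything from cooking to car repair. The dataset has had a significant influence on improving the ability of natural language processing systems to reason about physical interactions.

Current Challenges

The challenges faced in constructing PIQA include: ensuring data quality, in particular removing low-agreement examples during crowdsourced annotation; data cleaning, that is, removing stylistic features and trivial examples that could artificially raise model performance; and data bias and limitations, which may affect how well the dataset generalizes. As for the domain problem it addresses, physical commonsense reasoning remains difficult for natural language understanding systems, and accurately understanding and inferring the correct solution in a physical interaction is one of the challenges the dataset poses.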

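Common Scenarios

Typical Use Cases

Physical commonsense reasoning is a highly challenging task in natural language understanding. PIQA provides a dedicated research platform for it, and its typical use is to evaluate how well a model understands everyday physical-interaction situations, such as judging whether a cotton swab or a toothpick is better suited for applying eyeshadow. Through a multiple-choice question-answering format, the dataset asks the model to pick the more appropriate of two solutions, thereby testing its physical commonsense reasoning.

Academic Problems Addressed

PIQA addresses the research question of how to evaluate a model's understanding of commonsense about the physical world, which is important for moving natural language processing toward deeper understanding. It challenges the physical commonsense reasoning abilities of existing models and provides a standardized evaluation method along with a large number of high-quality data instances, helping to advance related algorithms and models.

Derived Work

The release of PIQA has encouraged a series of follow-up studies, such as further exploring the boundaries of physical commonsense reasoning, developing more precise evaluation metrics, and combining it with other types of datasets for cross-modal learning. This derived work has deepened our understanding of physical commonsense reasoning and broadened its potential applications across multiple domains.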

The content above was collected and summarized by AI.