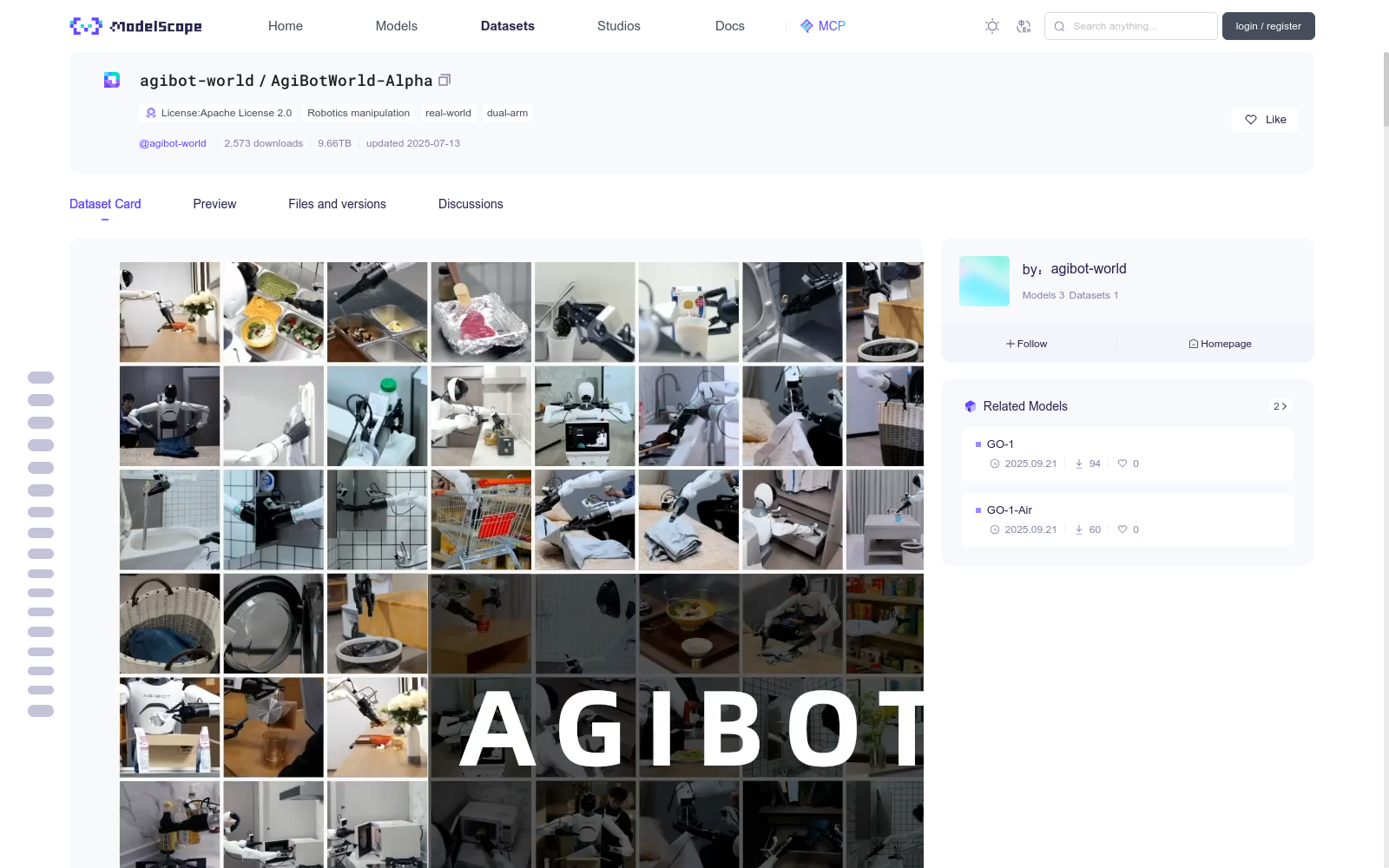

AgiBotWorld-Alpha

Hosted on the ModelScope community. Updated 2026-05-24; indexed 2025-01-11.

Download link:

https://modelscope.cn/datasets/AI-ModelScope/AgiBotWorld-Alpha


Description:

<!-- <img src="assets/agibot_world.gif" alt="Image Alt Text" width="70%" style="display: block; margin-left: auto; margin-right: auto;" /> -->

<video controls autoplay loop muted src="https://cdn-uploads.huggingface.co/production/uploads/6763e2cfd3c85f9b6d828f6c/9HkLtnqI_Qx62dNLLsI2I.mp4"></video>

<div align="center">

<a href="https://github.com/OpenDriveLab/AgiBot-World">

<img src="https://img.shields.io/badge/GitHub-grey?logo=GitHub" alt="GitHub Badge">

</a>

<a href="https://agibot-world.com">

<img src="https://img.shields.io/badge/Project%20Page-blue?style=plastic" alt="Project Page Badge">

</a>

<a href="https://opendrivelab.com/blog/agibot-world/">

<img src="https://img.shields.io/badge/Research_Blog-black?style=flat" alt="Research Blog Badge">

</a>

<a href="https://docs.google.com/spreadsheets/d/1GWMFHYo3UJADS7kkScoJ5ObbQfAFasPuaeC7TJUr1Cc/edit?usp=sharing">

<img src="https://img.shields.io/badge/Dataset-Overview-brightgreen?logo=googleforms" alt="Dataset Overview Badge">

</a>

</div>

# ⚠️Important Notice !!!

Dear Users,

The Alpha Dataset has been updated as follows:

- **Frame Loss Data Removal:** Several episodes with frame loss issues have been removed. For the complete list of removed episode IDs, please refer to this [document](https://docs.google.com/spreadsheets/d/1ggKZP1KOw3geTzdu5nx8iUB9nSbJOBMVU3p4uFWDcxw/edit?gid=0#gid=0).

- **Changes in Episode Count:** The updated Alpha Dataset retains the original 36 tasks. The new version has been enriched with additional interactive objects, extending the total duration from 474.12 hours to 595.31 hours.

- **Data Anonymization and Compression:** The dataset has been anonymized to remove personal and sensitive information, ensuring data privacy. Additionally, the dataset has been compressed to enhance storage and transfer efficiency.

- **Camera Extrinsic Parameters Correction:** The extrinsic parameters for some data have been corrected, improving the accuracy and consistency of camera data fusion across multiple perspectives.

We recommend that you use the new version in your projects. Thank you for your support and attention.

# Key Features 🔑

- **100,000+** trajectories from 100 robots, with a total duration of 595 hours.

- **100+ real-world scenarios** across 5 target domains.

- **Cutting-edge hardware:** visual tactile sensors / 6-DoF dexterous hand / mobile dual-arm robots

- **Tasks involving:**

  - Contact-rich manipulation

  - Long-horizon planning

  - Multi-robot collaboration

<div style="display: flex; justify-content: center; align-items: center; gap: 20px;">

<video controls autoplay loop muted width="300" style="border-radius: 10px; box-shadow: 0 4px 8px rgba(0, 0, 0, 0.1);">

<source src="https://cdn-uploads.huggingface.co/production/uploads/65111094c3b9d8d1eb71329e/RxhpvglFxkqBKp0siHEjd.mp4" type="video/mp4">

Your browser does not support the video tag.

</video>

<video controls autoplay loop muted width="300" style="border-radius: 10px; box-shadow: 0 4px 8px rgba(0, 0, 0, 0.1);">

<source src="https://cdn-uploads.huggingface.co/production/uploads/65111094c3b9d8d1eb71329e/WwTyIz3wJpIL23E6mPrVp.mp4" type="video/mp4">

Your browser does not support the video tag.

</video>

<video controls autoplay loop muted width="300" style="border-radius: 10px; box-shadow: 0 4px 8px rgba(0, 0, 0, 0.1);">

<source src="https://cdn-uploads.huggingface.co/production/uploads/65111094c3b9d8d1eb71329e/WjJ_H4YzAmdZ5ffqG06-Y.mp4" type="video/mp4">

Your browser does not support the video tag.

</video>

</div>

# News 🌍

- **`[2025/3/1]`** AgiBot World Beta released.

- **`[2025/1/20]`** AgiBot World Alpha released on OpenDataLab. [Download Link](https://opendatalab.com/OpenDataLab/AgiBot-World)

- **`[2025/1/3]`** AgiBot World Alpha [**sample dataset**](sample_dataset.tar) released.

- **`[2024/12/30]`** AgiBot World Alpha released.

# TODO List 📅

- [x] **AgiBot World Beta**: ~1,000,000 trajectories of high-quality robot data (expected release date: Q1 2025)

- [x] Complete language annotation of Alpha version (expected release date: Mid-January 2025)

- [ ] **AgiBot World Colosseum**: Comprehensive platform (expected release date: 2025)

- [ ] **2025 AgiBot World Challenge** (expected release date: 2025)

# Table of Contents

- [Key Features 🔑](#key-features-)

- [News 🌍](#news-)

- [TODO List 📅](#todo-list-)

- [Get started 🔥](#get-started-)

  - [Download the Dataset](#download-the-dataset)

  - [Dataset Preprocessing](#dataset-preprocessing)

  - [Dataset Structure](#dataset-structure)

  - [Explanation of Proprioceptive State](#explanation-of-proprioceptive-state)

- [License and Citation](#license-and-citation)

# Get started 🔥

## Download the Dataset

To download the full dataset, you can use the following code. If you encounter any issues, please refer to the official Hugging Face documentation.

```
# Make sure you have git-lfs installed (https://git-lfs.com)
git lfs install

# When prompted for a password, use an access token with write permissions.
# Generate one from your settings: https://huggingface.co/settings/tokens
git clone https://huggingface.co/datasets/agibot-world/AgiBotWorld-Alpha

# If you want to clone without large files - just their pointers
GIT_LFS_SKIP_SMUDGE=1 git clone https://huggingface.co/datasets/agibot-world/AgiBotWorld-Alpha
```

If you only want to download a specific task, such as `task_327`, you can use the following code.

```
# Make sure you have git-lfs installed (https://git-lfs.com)
git lfs install

# Initialize an empty Git repository
git init AgiBotWorld-Alpha
cd AgiBotWorld-Alpha

# Set the remote repository
git remote add origin https://huggingface.co/datasets/agibot-world/AgiBotWorld-Alpha

# Enable sparse-checkout
git sparse-checkout init

# Specify the folders and files
git sparse-checkout set observations/327 task_info/task_327.json scripts proprio_stats parameters

# Pull the data
git pull origin main
```
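When several tasks are needed, the path list for `git sparse-checkout set` can be generated rather than typed by hand. The following is a minimal sketch that only mirrors the command shown above; the helper name `sparse_checkout_command` and the constant `SHARED_PATHS` are hypothetical and not part of the repository.

```python
import shlex

# Folders that the command above checks out regardless of task.
SHARED_PATHS = ("scripts", "proprio_stats", "parameters")


def sparse_checkout_command(task_ids):
    """Build a `git sparse-checkout set` command for the given task ids."""
    paths = []
    for task_id in task_ids:
        # Per-task folders and files follow the pattern used for task 327 above.
        paths.append(f"observations/{task_id}")
        paths.append(f"task_info/task_{task_id}.json")
    paths.extend(SHARED_PATHS)
    return "git sparse-checkout set " + " ".join(shlex.quote(p) for p in paths)


single = sparse_checkout_command([327])
double = sparse_checkout_command([327, 352])
print(single)
print(double)
```

Run the printed command inside the initialized repository in place of the hand-written `git sparse-checkout set` line.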

To facilitate the inspection of the dataset's internal structure and examples, we also provide a sample dataset, which is approximately 7 GB. Please refer to `sample_dataset.tar`.

## Dataset Preprocessing

Our project relies solely on the [lerobot library](https://github.com/huggingface/lerobot) (dataset `v2.0`). Please follow their [installation instructions](https://github.com/huggingface/lerobot?tab=readme-ov-file#installation).

Here, we provide a script for converting the dataset to the lerobot format. **Note** that you need to replace `/path/to/agibotworld/alpha` and `/path/to/save/lerobot` with the actual paths.

```
python scripts/convert_to_lerobot.py --src_path /path/to/agibotworld/alpha --task_id 352 --tgt_path /path/to/save/lerobot
```
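The command above converts one task at a time. To convert several tasks, it can be wrapped in a small loop. This is a sketch under the assumption that the script accepts exactly the three flags shown above; the helper `build_convert_command` is hypothetical, and the `subprocess` call is left commented out so that nothing runs until the placeholder paths are replaced.

```python
import subprocess  # only needed once the run line below is uncommented
import sys


def build_convert_command(src_path, task_id, tgt_path,
                          script="scripts/convert_to_lerobot.py"):
    """Return the argument list for one conversion run (same flags as above)."""
    return [
        sys.executable, script,
        "--src_path", str(src_path),
        "--task_id", str(task_id),
        "--tgt_path", str(tgt_path),
    ]


commands = [
    build_convert_command("/path/to/agibotworld/alpha", task_id, "/path/to/save/lerobot")
    for task_id in (327, 352)
]
for cmd in commands:
    print(" ".join(cmd))
    # subprocess.run(cmd, check=True)  # uncomment to actually run the conversion
```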

We would like to express our gratitude to the developers of lerobot for their outstanding contributions to the open-source community.

## Dataset Structure

### Folder hierarchy

```
data
├── task_info
│   ├── task_327.json
│   ├── task_352.json
│   └── ...
├── observations
│   ├── 327 # This represents the task id.
│   │   ├── 648642 # This represents the episode id.
│   │   │   ├── depth # This is a folder containing depth information saved in PNG format.
│   │   │   └── videos # This is a folder containing videos from all camera perspectives.
│   │   ├── 648649
│   │   │   └── ...
│   │   └── ...
│   ├── 352
│   │   ├── 648544
│   │   │   ├── depth
│   │   │   └── videos
│   │   ├── 648564
│   │   │   └── ...
│   │   └── ...
│   └── ...
├── parameters
│   ├── 327
│   │   ├── 648642
│   │   │   └── camera
│   │   ├── 648649
│   │   │   └── camera
│   │   └── ...
│   ├── 352
│   │   ├── 648544
│   │   │   └── camera # This contains all the cameras' intrinsic and extrinsic parameters.
│   │   ├── 648564
│   │   │   └── camera
│   │   └── ...
│   └── ...
├── proprio_stats
│   ├── 327[task_id]
│   │   ├── 648642[episode_id]
│   │   │   └── proprio_stats.h5 # This file contains all the robot's proprioceptive information.
│   │   ├── 648649
│   │   │   └── proprio_stats.h5
│   │   └── ...
│   ├── 352[task_id]
│   │   ├── 648544[episode_id]
│   │   │   └── proprio_stats.h5
│   │   ├── 648564
│   │   │   └── proprio_stats.h5
│   │   └── ...
│   └── ...
```
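Because every modality uses the same `task_id/episode_id` nesting, the locations of all files for one episode can be derived from the dataset root. The sketch below illustrates this with `pathlib`; the helper names `episode_paths` and `list_episode_ids` are hypothetical, and the code assumes the layout is exactly as drawn above.

```python
import tempfile
from pathlib import Path


def episode_paths(root, task_id, episode_id):
    """Map each modality to its expected location for one episode."""
    root = Path(root)
    rel = Path(str(task_id)) / str(episode_id)
    return {
        "task_info": root / "task_info" / f"task_{task_id}.json",
        "depth": root / "observations" / rel / "depth",
        "videos": root / "observations" / rel / "videos",
        "camera": root / "parameters" / rel / "camera",
        "proprio": root / "proprio_stats" / rel / "proprio_stats.h5",
    }


def list_episode_ids(root, task_id):
    """Return the sorted episode ids found under observations/<task_id>."""
    task_dir = Path(root) / "observations" / str(task_id)
    if not task_dir.is_dir():
        return []
    return sorted(int(p.name) for p in task_dir.iterdir()
                  if p.is_dir() and p.name.isdigit())


# Demo on a throwaway directory that imitates the layout above.
with tempfile.TemporaryDirectory() as tmp:
    for episode_id in (648649, 648642):
        (Path(tmp) / "observations" / "327" / str(episode_id) / "videos").mkdir(parents=True)
    found = list_episode_ids(tmp, 327)

example = episode_paths("data", 327, 648642)
print(found)
print(example["proprio"])
```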

### json file format

In the `task_[id].json` file, we store the basic information of every episode along with the language instructions. Here, we will further explain several specific keywords.

- **action_config**: The content corresponding to this key is a list composed of all **action slices** from the episode. Each action slice includes a start and end time, the corresponding atomic skill, and the language instruction.

- **key_frame**: The content corresponding to this key consists of annotations for keyframes, including the start and end times of the keyframes and detailed descriptions.

```
[ {"episode_id": 649078,
   "task_id": 327,
   "task_name": "Picking items in Supermarket",
   "init_scene_text": "The robot is in front of the fruit shelf in the supermarket.",
   "lable_info":{
     "action_config":[
       {"start_frame": 0,
        "end_frame": 435,
        "action_text": "Pick up onion from the shelf.",
        "skill": "Pick"
       },
       {"start_frame": 435,
        "end_frame": 619,
        "action_text": "Place onion into the plastic bag in the shopping cart.",
        "skill": "Place"
       },
       ...
     ]
   },
   ...
]
```
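As an illustration of how the action slices can be read, the sketch below parses a trimmed copy of the example above and lists each slice. It is not an official loader: the function `action_slices` is hypothetical, and accepting `label_info` as an alternative to the `lable_info` spelling shown above is an assumption made in case the files differ.

```python
import json

# Trimmed copy of the example above, made valid JSON by dropping the elided parts.
SAMPLE = """
[{"episode_id": 649078,
  "task_id": 327,
  "task_name": "Picking items in Supermarket",
  "lable_info": {"action_config": [
    {"start_frame": 0, "end_frame": 435,
     "action_text": "Pick up onion from the shelf.", "skill": "Pick"},
    {"start_frame": 435, "end_frame": 619,
     "action_text": "Place onion into the plastic bag in the shopping cart.",
     "skill": "Place"}
  ]}}]
"""


def action_slices(episode):
    """Yield (start_frame, end_frame, skill, action_text) for one episode."""
    # Assumption: fall back to "label_info" if a file uses that spelling instead.
    labels = episode.get("lable_info") or episode.get("label_info") or {}
    for step in labels.get("action_config", []):
        yield step["start_frame"], step["end_frame"], step["skill"], step["action_text"]


episodes = json.loads(SAMPLE)
slices = list(action_slices(episodes[0]))
for start, end, skill, text in slices:
    print(f"episode {episodes[0]['episode_id']}: frames {start}-{end} [{skill}] {text}")
```

For a real file, replace `json.loads(SAMPLE)` with `json.load` on an opened `task_[id].json`.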

### h5 file format

In the `proprio_stats.h5` file, we store all the robot's proprioceptive data. For more detailed information, please refer to the [explanation of proprioceptive state](#explanation-of-proprioceptive-state).

```
|-- timestamp
|-- state
    |-- effector
        |-- force
        |-- position
    |-- end
        |-- angular
        |-- orientation
        |-- position
        |-- velocity
        |-- wrench
    |-- head
        |-- effort
        |-- position
        |-- velocity
    |-- joint
        |-- current_value
        |-- effort
        |-- position
        |-- velocity
    |-- robot
        |-- orientation
        |-- orientation_drift
        |-- position
        |-- position_drift
    |-- waist
        |-- effort
        |-- position
        |-- velocity
|-- action
    |-- effector
        |-- force
        |-- index
        |-- position
    |-- end
        |-- orientation
        |-- position
    |-- head
        |-- effort
        |-- position
        |-- velocity
    |-- joint
        |-- effort
        |-- index
        |-- position
        |-- velocity
    |-- robot
        |-- index
        |-- orientation
        |-- position
        |-- velocity
    |-- waist
        |-- effort
        |-- position
        |-- velocity
```
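A quick way to confirm this hierarchy in a downloaded file is to walk it and print every leaf path. The sketch below is one possible approach, not part of the dataset tooling: `walk` is a hypothetical helper that works on any mapping-like object, so it is demonstrated on a nested dictionary. Using it on a real file assumes the third-party `h5py` package, whose groups expose `.items()` in the same way.

```python
def walk(node, prefix=""):
    """Yield (path, leaf) for every leaf below a mapping-like node."""
    for name, child in node.items():
        path = f"{prefix}/{name}"
        if hasattr(child, "items"):  # a group (or a nested dict in this demo)
            yield from walk(child, path)
        else:  # a dataset (or a plain list in this demo)
            yield path, child


# Stand-in for a small part of the hierarchy above, with dummy values.
demo = {
    "timestamp": [0, 1],
    "state": {"joint": {"position": [[0.0] * 14, [0.0] * 14]}},
    "action": {"joint": {"index": [0, 1], "position": [[0.0] * 14, [0.0] * 14]}},
}
paths = [path for path, _ in walk(demo)]
print(paths)

# With h5py installed (assumption), the same helper works on a real file:
#   import h5py
#   with h5py.File("proprio_stats.h5", "r") as f:
#       for path, dataset in walk(f):
#           print(path, dataset.shape)
```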

## Explanation of Proprioceptive State

### Terminology

*The definitions and data ranges in this section may change with software and hardware versions. Stay tuned.*

**State and action**

1. State

State refers to the monitoring information of different sensors and actuators.

2. Action

Action refers to the instructions sent to the hardware abstraction layer, where the controller responds to them. As a result, the issued instructions can differ from the state that is actually executed.
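One way to quantify the gap between issued instructions and the executed state is a per-joint mean absolute difference between commanded and measured joint positions. The sketch below uses plain Python lists with made-up numbers; it assumes that row `i` of the action array and row `i` of the state array describe the same frame, which should be checked against the index fields before relying on it. The function name `mean_abs_tracking_error` is hypothetical.

```python
def mean_abs_tracking_error(commanded, measured):
    """Per-joint mean |commanded - measured| over frames (rows)."""
    if len(commanded) != len(measured):
        raise ValueError("commanded and measured must have the same number of frames")
    if not commanded:
        return []
    n_joints = len(commanded[0])
    totals = [0.0] * n_joints
    for cmd_row, meas_row in zip(commanded, measured):
        for j in range(n_joints):
            totals[j] += abs(cmd_row[j] - meas_row[j])
    return [total / len(commanded) for total in totals]


# Made-up values for two joints over two frames, in rad.
commanded = [[0.10, 0.20], [0.30, 0.40]]
measured = [[0.08, 0.20], [0.26, 0.43]]
errors = mean_abs_tracking_error(commanded, measured)
print(errors)  # roughly [0.03, 0.015]
```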

**Actuators**

1. ***Effector:*** refers to the end effector, for example dexterous hands or grippers.

2. ***End:*** refers to the 6DoF end pose on the robot flange.

3. ***Head:*** refers to the robot's head perspective, which has two degrees of freedom (pitch and yaw).

4. ***Joint:*** refers to the joints of the robot's dual arms, with 14 degrees of freedom (7 DoF each).

5. ***Robot:*** refers to the robot's pose in its surrounding environment. The orientation and position give the robot's relative pose in the odometry coordinate system, whose origin is set when the robot is powered on.

6. ***Waist:*** refers to the joints of the robot's waist, which has two degrees of freedom (pitch and lift).

### Common fields

1. Position: Spatial position, encoder position, angle, etc.

2. Velocity: Speed

3. Angular: Angular velocity

4. Effort: Torque of the motor. Not available for now.

5. Wrench: Six-dimensional force, force in the xyz directions, and torque. Not available for now.

### Value shapes and ranges

| Group | Shape | Meaning |
| --- | :---- | :---- |
| /timestamp | [N] | timestamp in nanoseconds |
| /state/effector/position (gripper) | [N, 2] | left `[:, 0]`, right `[:, 1]`, gripper open range in mm |
| /state/effector/position (dexhand) | [N, 12] | left `[:, :6]`, right `[:, 6:]`, joint angle in rad |
| /state/end/orientation | [N, 2, 4] | left `[:, 0, :]`, right `[:, 1, :]`, flange quaternion with xyzw |
| /state/end/position | [N, 2, 3] | left `[:, 0, :]`, right `[:, 1, :]`, flange xyz in meters |
| /state/head/position | [N, 2] | yaw `[:, 0]`, pitch `[:, 1]`, rad |
| /state/joint/current_value | [N, 14] | left arm `[:, :7]`, right arm `[:, 7:]` |
| /state/joint/position | [N, 14] | left arm `[:, :7]`, right arm `[:, 7:]`, rad |
| /state/robot/orientation | [N, 4] | quaternion in xyzw, yaw only |
| /state/robot/position | [N, 3] | xyz position in meters, where z is always 0 |
| /state/waist/position | [N, 2] | pitch `[:, 0]` in rad, lift `[:, 1]` in meters |
| /action/*/index | [M] | action indexes refer to when the control source is actually sending signals |
| /action/effector/position (gripper) | [N, 2] | left `[:, 0]`, right `[:, 1]`, 0 for full open and 1 for full close |
| /action/effector/position (dexhand) | [N, 12] | same as /state/effector/position |
| /action/effector/index | [M_1] | index when the control source for end effector is sending control signals |
| /action/end/orientation | [N, 2, 4] | same as /state/end/orientation |
| /action/end/position | [N, 2, 3] | same as /state/end/position |
| /action/end/index | [M_2] | same as other indexes |
| /action/head/position | [N, 2] | same as /state/head/position |
| /action/head/index | [M_3] | same as other indexes |
| /action/joint/position | [N, 14] | same as /state/joint/position |
| /action/joint/index | [M_4] | same as other indexes |
| /action/robot/velocity | [N, 2] | vel along x axis `[:, 0]`, yaw rate `[:, 1]` |
| /action/robot/index | [M_5] | same as other indexes |
| /action/waist/position | [N, 2] | same as /state/waist/position |
| /action/waist/index | [M_6] | same as other indexes |
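The conventions in the table (nanosecond timestamps, xyzw quaternions, left arm first in the 14 joint columns) translate into a few small helpers. The sketch below uses only the standard library and made-up values; the function names are hypothetical, and the yaw formula is the standard quaternion-to-yaw conversion, not code taken from the dataset scripts.

```python
import math


def yaw_from_quat_xyzw(q):
    """Yaw (rotation about z, in rad) of a quaternion stored as (x, y, z, w)."""
    x, y, z, w = q
    return math.atan2(2.0 * (w * z + x * y), 1.0 - 2.0 * (y * y + z * z))


def seconds_since_start(timestamps_ns):
    """Convert nanosecond timestamps to seconds relative to the first sample."""
    if not timestamps_ns:
        return []
    t0 = timestamps_ns[0]
    return [(t - t0) / 1e9 for t in timestamps_ns]


def split_arms(joint_row):
    """Split one [14] joint row into (left arm [7], right arm [7])."""
    if len(joint_row) != 14:
        raise ValueError("expected 14 joint values")
    return list(joint_row[:7]), list(joint_row[7:])


# Made-up samples: a quarter turn about z, three timestamps, one joint row.
quarter_turn = (0.0, 0.0, math.sin(math.pi / 4), math.cos(math.pi / 4))
yaw = yaw_from_quat_xyzw(quarter_turn)
elapsed = seconds_since_start([1_000_000_000, 1_500_000_000, 2_000_000_000])
left, right = split_arms(list(range(14)))
print(yaw, elapsed, left, right)
```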

# License and Citation

All the data and code within this repo are under [CC BY-NC-SA 4.0](https://creativecommons.org/licenses/by-nc-sa/4.0/). Please consider citing our project if it helps your research.

```BibTeX
@misc{contributors2024agibotworldrepo,
  title={AgiBot World Colosseum},
  author={AgiBot World Colosseum contributors},
  howpublished={\url{https://github.com/OpenDriveLab/AgiBot-World}},
  year={2024}
}
```

Provider: maas

Created: 2024-12-31


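Background overview

AgiBotWorld-Alpha is a large-scale dataset focused on real-world robot manipulation, aimed in particular at dual-arm robot systems. It contains more than 100,000 trajectories with a total duration of about 595 hours, covering 100+ real-world scenarios across 5 target domains and complex tasks such as contact-rich manipulation, long-horizon planning, and multi-robot collaboration. The dataset has been updated: episodes with frame loss were removed, more interactive objects were added, and the data was anonymized and compressed to improve data quality and privacy protection.

The summary above was collected and generated by 遇见数据集.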