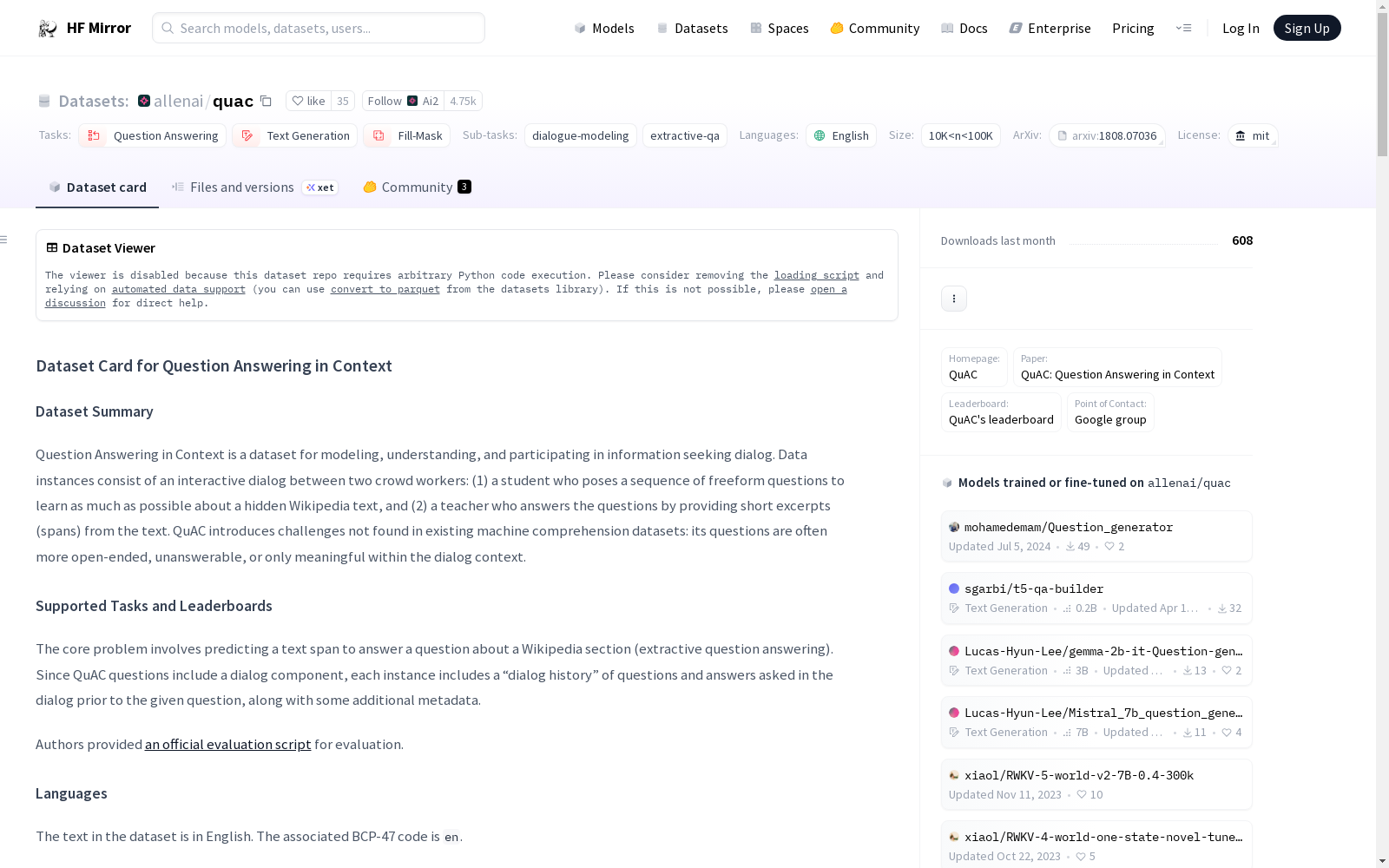

allenai/quac

Dataset Overview

Name: Question Answering in Context (QuAC)

Language: English (en)

License: MIT

Multilinguality: monolingual

Size: 10K<n<100K

Source datasets: extended from Wikipedia

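Task categories:

- Question answering

- Text generation

- Fill-mask

Task IDs:

- Dialogue modeling

- Extractive question answering

Papers with Code ID: quac

Pretty name: Question Answering in Context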

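Dataset Structure

Data Instances

Each data instance consists of a dialogue ID, Wikipedia page title, background, section title, context, dialogue turn IDs, questions, follow-up actions, yes/no responses, and answers.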

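Data Fields

- dialogue_id: ID of the dialogue

- wikipedia_page_title: title of the Wikipedia page

- background: first paragraph of the main Wikipedia article

- section_title: title of the Wikipedia section

- context: text of the Wikipedia section

- turn_ids: list of dialogue turn IDs

- questions: list of the questions asked in the dialogue

- followups: list of the follow-up actions in the dialogue

- yesnos: list of the yes/no responses in the dialogue

- answers: dictionary of answers to the questions, containing the answer start positions and texts

- orig_answers: dictionary of the original answers, containing the answer start positions and texts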
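The per-turn lists in a record are parallel, so one dialogue can be walked turn by turn. The following is a minimal sketch, not the dataset's official loader: the record is a toy example invented for illustration, `iter_turns` is a hypothetical helper, and the key names `texts` and `answer_starts` inside `orig_answers` are an assumption, since this card only says the dictionary holds start positions and texts.

```python
from typing import Dict, Iterator, Tuple

# Toy context, invented for illustration; not taken from the dataset.
CONTEXT = "Ada wrote the first program. She worked with Babbage."

# Toy record using a subset of the documented fields.
# Assumption: `orig_answers` stores parallel lists under the keys
# "texts" and "answer_starts" (key names are not stated in this card).
toy_record: Dict = {
    "dialogue_id": "toy_dialogue_0",
    "context": CONTEXT,
    "turn_ids": ["toy_dialogue_0_q#0", "toy_dialogue_0_q#1"],
    "questions": ["What did Ada write?", "Who did she work with?"],
    "orig_answers": {
        "texts": ["the first program", "Babbage"],
        "answer_starts": [
            CONTEXT.index("the first program"),
            CONTEXT.index("Babbage"),
        ],
    },
}


def iter_turns(record: Dict) -> Iterator[Tuple[str, str, str, bool]]:
    """Yield (turn_id, question, answer_text, span_matches) for each turn.

    span_matches is True when slicing the context at the answer start
    reproduces the answer text, which is what extractive QA expects.
    """
    answers = record["orig_answers"]
    for turn_id, question, text, start in zip(
        record["turn_ids"],
        record["questions"],
        answers["texts"],
        answers["answer_starts"],
    ):
        span = record["context"][start:start + len(text)]
        yield turn_id, question, text, span == text


for turn_id, question, text, ok in iter_turns(toy_record):
    print(turn_id, "|", question, "->", text, "| span matches:", ok)
```

Checking that each start offset reproduces its answer text is a cheap sanity test to run before training an extractive model on the data.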

Data Splits

- Training set: 83,568 questions (11,567 dialogues)

- Validation set: 7,354 questions (1,000 dialogues)

- Test set: 7,353 questions (1,002 dialogues)
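As a quick check on the split sizes, the sketch below computes the average number of questions per dialogue and the overall totals. The counts are copied from the list above; the names `SPLITS` and `questions_per_dialogue` are introduced here for illustration only.

```python
# Split sizes as stated in this card: (questions, dialogues).
SPLITS = {
    "train": (83_568, 11_567),
    "validation": (7_354, 1_000),
    "test": (7_353, 1_002),
}


def questions_per_dialogue(questions: int, dialogues: int) -> float:
    """Average number of questions asked in one dialogue."""
    return questions / dialogues


total_questions = sum(q for q, _ in SPLITS.values())
total_dialogues = sum(d for _, d in SPLITS.values())

for name, (q, d) in SPLITS.items():
    avg = questions_per_dialogue(q, d)
    print(f"{name}: {avg:.2f} questions per dialogue")
print(f"total: {total_questions} questions in {total_dialogues} dialogues")
```

The totals come to 98,275 questions in 13,569 dialogues, which agrees with the rounded figure of 14K dialogs and 100K questions quoted in the paper abstract.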

Dataset Creation

Licensing Information

The dataset is released under the MIT license.

Citation Information

@inproceedings{choi-etal-2018-quac,
    title = "{Q}u{AC}: Question Answering in Context",
    author = "Choi, Eunsol and He, He and Iyyer, Mohit and Yatskar, Mark and Yih, Wen-tau and Choi, Yejin and Liang, Percy and Zettlemoyer, Luke",
    booktitle = "Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing",
    month = oct # "-" # nov,
    year = "2018",
    address = "Brussels, Belgium",
    publisher = "Association for Computational Linguistics",
    url = "https://www.aclweb.org/anthology/D18-1241",
    doi = "10.18653/v1/D18-1241",
    pages = "2174--2184",
    abstract = "We present QuAC, a dataset for Question Answering in Context that contains 14K information-seeking QA dialogs (100K questions in total). The dialogs involve two crowd workers: (1) a student who poses a sequence of freeform questions to learn as much as possible about a hidden Wikipedia text, and (2) a teacher who answers the questions by providing short excerpts from the text. QuAC introduces challenges not found in existing machine comprehension datasets: its questions are often more open-ended, unanswerable, or only meaningful within the dialog context, as we show in a detailed qualitative evaluation. We also report results for a number of reference models, including a recently state-of-the-art reading comprehension architecture extended to model dialog context. Our best model underperforms humans by 20 F1, suggesting that there is significant room for future work on this data. Dataset, baseline, and leaderboard available at \url{http://quac.ai}.",
}