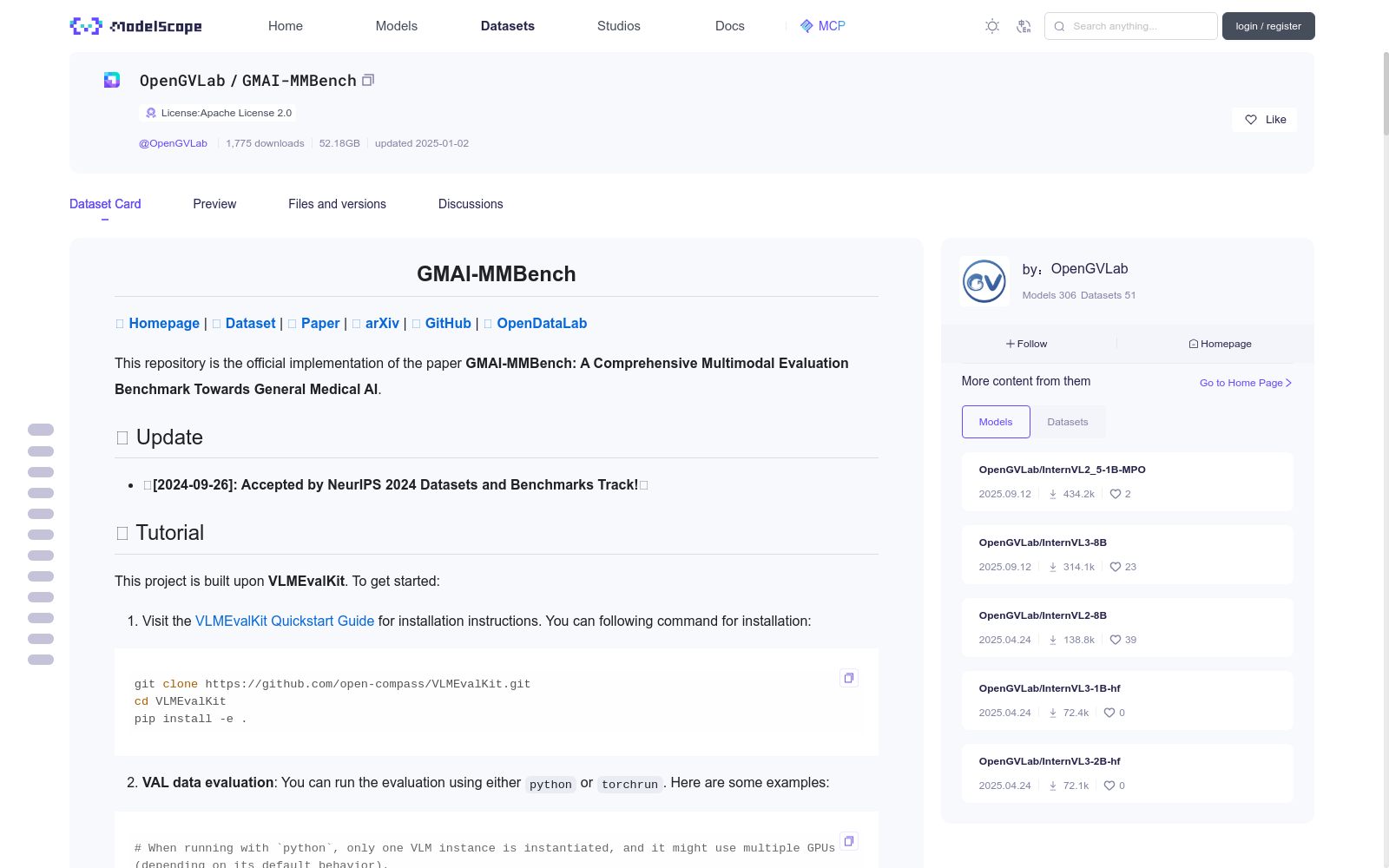

GMAI-MMBench

Source: ModelScope community. Updated 2026-01-09; indexed 2025-01-04.

Download link:

https://modelscope.cn/datasets/OpenGVLab/GMAI-MMBench


# <div align="center"><b> GMAI-MMBench </b></div>

[🍎 **Homepage**](https://uni-medical.github.io/GMAI-MMBench.github.io/#2023xtuner) | [**🤗 Dataset**](https://huggingface.co/datasets/myuniverse/GMAI-MMBench) | [**🤗 Paper**](https://huggingface.co/papers/2408.03361) | [**📖 arXiv**](https://arxiv.org/abs/2408.03361) | [**🐙 GitHub**](https://github.com/uni-medical/GMAI-MMBench) | [**🌐 OpenDataLab**](https://opendatalab.com/GMAI/MMBench)

This repository is the official implementation of the paper **GMAI-MMBench: A Comprehensive Multimodal Evaluation Benchmark Towards General Medical AI**.

## 🌈 Update

- **🚀[2024-09-26]: Accepted by NeurIPS 2024 Datasets and Benchmarks Track!🌟**

## 🚗 Tutorial

This project is built upon **VLMEvalKit**. To get started:

1. Visit the [VLMEvalKit Quickstart Guide](https://github.com/open-compass/VLMEvalKit/blob/main/docs/en/get_started/Quickstart.md) for installation instructions. You can install it with the following commands:

```bash
git clone https://github.com/open-compass/VLMEvalKit.git
cd VLMEvalKit
pip install -e .
```

2. **VAL data evaluation**: You can run the evaluation using either `python` or `torchrun`. Here are some examples:

```bash
# When running with `python`, only one VLM instance is instantiated, and it might use multiple GPUs (depending on its default behavior).
# This is recommended for evaluating very large VLMs (like IDEFICS-80B-Instruct).

# IDEFICS-80B-Instruct on GMAI-MMBench_VAL, inference and evaluation
python run.py --data GMAI-MMBench_VAL --model idefics_80b_instruct --verbose

# IDEFICS-80B-Instruct on GMAI-MMBench_VAL, inference only
python run.py --data GMAI-MMBench_VAL --model idefics_80b_instruct --verbose --mode infer

# When running with `torchrun`, one VLM instance is instantiated on each GPU, which can speed up inference.
# However, this is only suitable for VLMs that consume small amounts of GPU memory.

# IDEFICS-9B-Instruct, Qwen-VL-Chat, mPLUG-Owl2 on GMAI-MMBench_VAL, on a node with 8 GPUs. Inference and evaluation.
torchrun --nproc-per-node=8 run.py --data GMAI-MMBench_VAL --model idefics_9b_instruct qwen_chat mPLUG-Owl2 --verbose

# Qwen-VL-Chat on GMAI-MMBench_VAL, on a node with 2 GPUs. Inference and evaluation.
torchrun --nproc-per-node=2 run.py --data GMAI-MMBench_VAL --model qwen_chat --verbose
```

The evaluation results are printed as logs. In addition, **result files** are generated in the directory `$YOUR_WORKING_DIRECTORY/{model_name}`; files ending with `.csv` contain the evaluated metrics.

**TEST data evaluation**

```bash
# When running with `python`, only one VLM instance is instantiated, and it might use multiple GPUs (depending on its default behavior).
# This is recommended for evaluating very large VLMs (like IDEFICS-80B-Instruct).

# IDEFICS-80B-Instruct on GMAI-MMBench_TEST, inference and evaluation
python run.py --data GMAI-MMBench_TEST --model idefics_80b_instruct --verbose

# IDEFICS-80B-Instruct on GMAI-MMBench_TEST, inference only
python run.py --data GMAI-MMBench_TEST --model idefics_80b_instruct --verbose --mode infer

# When running with `torchrun`, one VLM instance is instantiated on each GPU, which can speed up inference.
# However, this is only suitable for VLMs that consume small amounts of GPU memory.

# IDEFICS-9B-Instruct, Qwen-VL-Chat, mPLUG-Owl2 on GMAI-MMBench_TEST, on a node with 8 GPUs. Inference and evaluation.
torchrun --nproc-per-node=8 run.py --data GMAI-MMBench_TEST --model idefics_9b_instruct qwen_chat mPLUG-Owl2 --verbose

# Qwen-VL-Chat on GMAI-MMBench_TEST, on a node with 2 GPUs. Inference and evaluation.
torchrun --nproc-per-node=2 run.py --data GMAI-MMBench_TEST --model qwen_chat --verbose
```

Because the test data does not include answers, the run ends with an error. This error indicates that VLMEvalKit cannot retrieve the answers during the final result-matching stage.

You can access the generated intermediate results in `VLMEvalKit/outputs/<MODEL>`. The intermediate result is an Excel file in which the model's predictions are listed in the `prediction` column.

You will then need to send this Excel file via email to guoanwang971@gmail.com. The email must include the following information: \<Model Name\>, \<Team Name\>, and \<arxiv paper link\>. We will calculate the accuracy of your model using the answer key and periodically update the leaderboard.

3. You can find more details in https://github.com/open-compass/VLMEvalKit/blob/main/vlmeval/dataset/image_mcq.py.
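For the VAL workflow described above, the metric files can be gathered with a few lines of Python. This is only a minimal sketch: the helper name `find_metric_files` is hypothetical, and it assumes the result files sit somewhere under `<working directory>/<model name>` and end in `.csv`, as stated in the tutorial.

```python
from pathlib import Path


def find_metric_files(work_dir, model_name):
    """Return sorted paths of all .csv files under work_dir/model_name."""
    return sorted((Path(work_dir) / model_name).rglob("*.csv"))


# Example call; "outputs" and "qwen_chat" are placeholders for your own
# working directory and model name.
for path in find_metric_files("outputs", "qwen_chat"):
    print(path)
```

Searching recursively is a deliberate choice here, since the exact sub-directory layout under the model folder is not specified in this README.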

## Rendering Images for Visualization

To make it easy to test the benchmark with VLMEvalKit, we store our data directly in TSV format, so the benchmark works with this tool without any additional processing. To prevent data leakage, only the VAL data includes the "answer" column; it has been removed from the TEST data.
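A quick way to confirm which split a TSV file belongs to is to look at its header. The sketch below uses only the standard library; the helper names and the tiny in-memory files are illustrative, and every column other than "image" and "answer" is an assumption rather than the documented schema.

```python
import csv
import io

# Base64 image fields can exceed the csv module's default field size limit.
csv.field_size_limit(2**31 - 1)


def tsv_columns(handle):
    """Return the header columns of a tab-separated file object."""
    reader = csv.reader(handle, delimiter="\t")
    return next(reader)


def has_answer_column(handle):
    """True if the TSV exposes an 'answer' column (expected for VAL, not TEST)."""
    return "answer" in tsv_columns(handle)


# Tiny in-memory stand-ins for the real files; the column sets are illustrative.
val_like = io.StringIO("index\tquestion\timage\tanswer\n0\tQ?\tiVBORw0KGgo=\tA\n")
test_like = io.StringIO("index\tquestion\timage\n0\tQ?\tiVBORw0KGgo=\n")

print(has_answer_column(val_like))   # True
print(has_answer_column(test_like))  # False
```

For a real file, pass `open(path, newline="", encoding="utf-8")` instead of the `io.StringIO` objects.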

For the "image" column, we use Base64 encoding (to comply with [VLMEvalKit](https://github.com/open-compass/VLMEvalKit)'s requirements). The encoding code is as follows:

```python
import base64

import cv2


def encode_image_to_base64(image):
    """Convert an image array to a Base64-encoded PNG string."""
    _, buffer = cv2.imencode('.png', image)
    return base64.b64encode(buffer).decode()


# image_path is the path to the source image file.
image = cv2.imread(image_path, cv2.IMREAD_COLOR)
encoded_image = encode_image_to_base64(image)
```

The code for converting the Base64 format back into an image can be referenced from the official [VLMEvalKit](https://github.com/open-compass/VLMEvalKit):

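```python
import base64
import io

from PIL import Image


def decode_base64_to_image(base64_string, target_size=-1):
    image_data = base64.b64decode(base64_string)
    image = Image.open(io.BytesIO(image_data))
    # Normalize palette and alpha images to plain RGB.
    if image.mode in ('RGBA', 'P'):
        image = image.convert('RGB')
    # Optionally shrink so that neither side exceeds target_size.
    if target_size > 0:
        image.thumbnail((target_size, target_size))
    return image
```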

If needed, below is the official code provided by [VLMEvalKit](https://github.com/open-compass/VLMEvalKit) for converting an image to Base64 encoding:

```python
import base64
import io

from PIL import Image


def encode_image_to_base64(img, target_size=-1):
    # if target_size == -1, will not do resizing
    # else, will set the max_size to (target_size, target_size)
    if img.mode in ('RGBA', 'P'):
        img = img.convert('RGB')
    if target_size > 0:
        img.thumbnail((target_size, target_size))
    img_buffer = io.BytesIO()
    img.save(img_buffer, format='JPEG')
    image_data = img_buffer.getvalue()
    ret = base64.b64encode(image_data).decode('utf-8')
    return ret


def encode_image_file_to_base64(image_path, target_size=-1):
    image = Image.open(image_path)
    return encode_image_to_base64(image, target_size=target_size)
```
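The two encoders above produce different container formats: the benchmark's own snippet writes PNG bytes, while the VLMEvalKit helper writes JPEG bytes. If you need to tell them apart without installing an imaging library, one option is to inspect the magic bytes of the decoded string. The following is a minimal standard-library sketch; `sniff_image_format` is a hypothetical helper, not part of VLMEvalKit.

```python
import base64

PNG_MAGIC = b"\x89PNG\r\n\x1a\n"
JPEG_MAGIC = b"\xff\xd8\xff"


def sniff_image_format(base64_string):
    """Guess the container format of a Base64-encoded image from its magic bytes."""
    # 12 Base64 characters decode to 9 bytes, enough for both signatures.
    head = base64.b64decode(base64_string[:12])
    if head.startswith(PNG_MAGIC):
        return "png"
    if head.startswith(JPEG_MAGIC):
        return "jpeg"
    return "unknown"


png_like = base64.b64encode(PNG_MAGIC + b"rest").decode()
jpeg_like = base64.b64encode(JPEG_MAGIC + b"\xe0" + b"\x00" * 8).decode()

print(sniff_image_format(png_like))   # png
print(sniff_image_format(jpeg_like))  # jpeg
```

Only the first few characters are decoded, so the check stays cheap even for large images.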

## Benchmark Details

We introduce GMAI-MMBench: the most comprehensive general medical AI benchmark to date, with a well-categorized data structure and multiple perceptual granularities. It is constructed from **284 datasets** across **38 medical image modalities**, **18 clinical-related tasks**, **18 departments**, and **4 perceptual granularities** in a Visual Question Answering (VQA) format. Additionally, we implemented a **lexical tree** structure that allows users to customize evaluation tasks, accommodating various assessment needs and substantially supporting medical AI research and applications. We evaluated 50 LVLMs, and the results show that even the advanced GPT-4o achieves an accuracy of only 53.96% on the test set (53.53% on the validation set), indicating significant room for improvement. We believe GMAI-MMBench will stimulate the community to build the next generation of LVLMs toward GMAI.

## Benchmark Creation

GMAI-MMBench is constructed from 284 datasets across 38 medical image modalities. Of these, 268 come from public sources and 16 from hospitals that have agreed to share their ethically approved data. The data collection can be divided into three main steps:

1) We search hundreds of datasets from both public sources and hospitals, then keep 284 datasets with high-quality labels after filtering the datasets, unifying the image format, and standardizing the label expressions.

2) We categorize all labels into 18 clinical VQA tasks and 18 clinical departments, then export a lexical tree for easily customized evaluation.

3) We generate QA pairs for each label from its corresponding question and option pool. Each question must include information about image modality, task cue, and corresponding annotation granularity.

The final benchmark is obtained through additional validation and manual selection.
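Step 3 can be pictured with a small sketch. This is not the authors' generation code: the function name `build_qa`, the pool contents, and the fixed number of four options are all assumptions made for illustration. It only shows the general idea of drawing a question template and distractor options from pools and recording which letter holds the correct label.

```python
import random


def build_qa(label, question_pool, option_pool, num_options=4, seed=0):
    """Build one multiple-choice item whose correct option is `label`."""
    rng = random.Random(seed)
    question = rng.choice(question_pool)
    # Draw distractors from the pool, excluding the correct label.
    distractors = rng.sample([o for o in option_pool if o != label], num_options - 1)
    options = distractors + [label]
    rng.shuffle(options)
    letters = "ABCDEFGH"[:len(options)]
    return {
        "question": question,
        "options": dict(zip(letters, options)),
        "answer": letters[options.index(label)],
    }


# Hypothetical pools; each template names the modality, the task cue,
# and the annotation granularity, as the step above requires.
question_pool = [
    "In this fundus photography image, which disease is shown at the image level?",
    "Looking at the whole fundus photograph, what is the most likely diagnosis?",
]
option_pool = ["glaucoma", "cataract", "diabetic retinopathy", "normal", "macular edema"]

item = build_qa("glaucoma", question_pool, option_pool, seed=42)
print(item["question"])
print(item["options"], "->", item["answer"])
```

Seeding the generator keeps the item reproducible, which makes later validation and manual selection easier to audit.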

## Lexical Tree

In this work, to make the GMAI-MMBench more intuitive and user-friendly, we have systematized our labels and structured the entire dataset into a lexical tree. Users can freely select the test contents based on this lexical tree. We believe that this customizable benchmark will effectively guide the improvement of models in specific areas.

You can see the complete lexical tree at [**🍎 Homepage**](https://uni-medical.github.io/GMAI-MMBench.github.io/#2023xtuner).

## Evaluation

The following example shows how to use the lexical tree to customize an evaluation. The process involves selecting the department (ophthalmology), choosing the modality (fundus photography), filtering questions using relevant keywords, and evaluating different models based on their accuracy in answering the filtered questions.
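The same selection can be sketched in plain Python. The record fields used here (`department`, `modality`, `question`, `answer`, `prediction`) are assumed names for illustration and may not match the actual TSV or result-file columns.

```python
def filter_questions(records, department, modality, keywords):
    """Keep records matching a department, a modality, and any of the keywords."""
    lowered = [k.lower() for k in keywords]
    return [
        r for r in records
        if r["department"] == department
        and r["modality"] == modality
        and any(k in r["question"].lower() for k in lowered)
    ]


def accuracy(records):
    """Fraction of records whose prediction equals the answer; None if empty."""
    if not records:
        return None
    correct = sum(r["prediction"] == r["answer"] for r in records)
    return correct / len(records)


# Toy records standing in for model outputs on the benchmark.
records = [
    {"department": "ophthalmology", "modality": "fundus photography",
     "question": "Which disease is shown in this fundus image?",
     "answer": "A", "prediction": "A"},
    {"department": "ophthalmology", "modality": "fundus photography",
     "question": "What is the severity grade of glaucoma?",
     "answer": "B", "prediction": "C"},
    {"department": "cardiology", "modality": "CT",
     "question": "Which disease is shown?",
     "answer": "D", "prediction": "D"},
]

subset = filter_questions(records, "ophthalmology", "fundus photography",
                          ["disease", "glaucoma"])
print(len(subset), accuracy(subset))  # 2 0.5
```

Running the filter once per model on that model's predictions gives directly comparable accuracies on the chosen slice.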

## 🏆 Leaderboard

| Rank | Model Name | Val | Test |
|:----:|:-------------------------:|:-----:|:-----:|
| | Random | 25.70 | 25.94 |
| 1 | GPT-4o | 53.53 | 53.96 |
| 2 | Gemini 1.5 | 47.42 | 48.36 |
| 3 | Gemini 1.0 | 44.38 | 44.93 |
| 4 | GPT-4V | 42.50 | 44.08 |
| 5 | MedDr | 41.95 | 43.69 |
| 6 | MiniCPM-V2 | 41.79 | 42.54 |
| 7 | DeepSeek-VL-7B | 41.73 | 43.43 |
| 8 | Qwen-VL-Max | 41.34 | 42.16 |
| 9 | LLAVA-InternLM2-7b | 40.07 | 40.45 |
| 10 | InternVL-Chat-V1.5 | 38.86 | 39.73 |
| 11 | TransCore-M | 38.86 | 38.70 |
| 12 | XComposer2 | 38.68 | 39.20 |
| 13 | LLAVA-V1.5-7B | 38.23 | 37.96 |
| 14 | OmniLMM-12B | 37.89 | 39.30 |
| 15 | Emu2-Chat | 36.50 | 37.59 |
| 16 | mPLUG-Owl2 | 35.62 | 36.21 |
| 17 | CogVLM-Chat | 35.23 | 36.08 |
| 18 | Qwen-VL-Chat | 35.07 | 36.96 |
| 19 | Yi-VL-6B | 34.82 | 34.31 |
| 20 | Claude3-Opus | 32.37 | 32.44 |
| 21 | MMAlaya | 32.19 | 32.30 |
| 22 | Mini-Gemini-7B | 32.17 | 31.09 |
| 23 | InstructBLIP-7B | 31.80 | 30.95 |
| 24 | Idefics-9B-Instruct | 29.74 | 31.13 |
| 25 | VisualGLM-6B | 29.58 | 30.45 |
| 26 | RadFM | 22.95 | 22.93 |
| 27 | Qilin-Med-VL-Chat | 22.34 | 22.06 |
| 28 | LLaVA-Med | 20.54 | 19.60 |
| 29 | Med-Flamingo | 12.74 | 11.64 |

## Disclaimers

The guidelines for the annotators emphasized strict compliance with copyright and licensing rules from the initial data source, specifically avoiding materials from websites that forbid copying and redistribution.

Should you encounter any data samples potentially breaching the copyright or licensing regulations of any site, we encourage you to contact us. Upon verification, such samples will be promptly removed.

## Contact

- Jin Ye: jin.ye@monash.edu

- Junjun He: hejunjun@pjlab.org.cn

- Qiao Yu: qiaoyu@pjlab.org.cn

## Citation

**BibTeX:**

```bibtex
@misc{chen2024gmaimmbenchcomprehensivemultimodalevaluation,
  title={GMAI-MMBench: A Comprehensive Multimodal Evaluation Benchmark Towards General Medical AI},
  author={Pengcheng Chen and Jin Ye and Guoan Wang and Yanjun Li and Zhongying Deng and Wei Li and Tianbin Li and Haodong Duan and Ziyan Huang and Yanzhou Su and Benyou Wang and Shaoting Zhang and Bin Fu and Jianfei Cai and Bohan Zhuang and Eric J Seibel and Junjun He and Yu Qiao},
  year={2024},
  eprint={2408.03361},
  archivePrefix={arXiv},
  primaryClass={eess.IV},
  url={https://arxiv.org/abs/2408.03361},
}
```


Provider: maas

Created: 2024-12-27

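## Background Overview

GMAI-MMBench is a comprehensive multimodal evaluation benchmark intended to advance general medical AI. It is built from 284 datasets covering 38 medical image modalities, 18 clinical tasks, and 18 departments, organized in a visual question answering format with a lexical tree structure that supports customized evaluation. The benchmark has been used to evaluate 50 large vision-language models; the highest accuracy is only 53.96%, revealing significant room for improvement in medical AI.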
