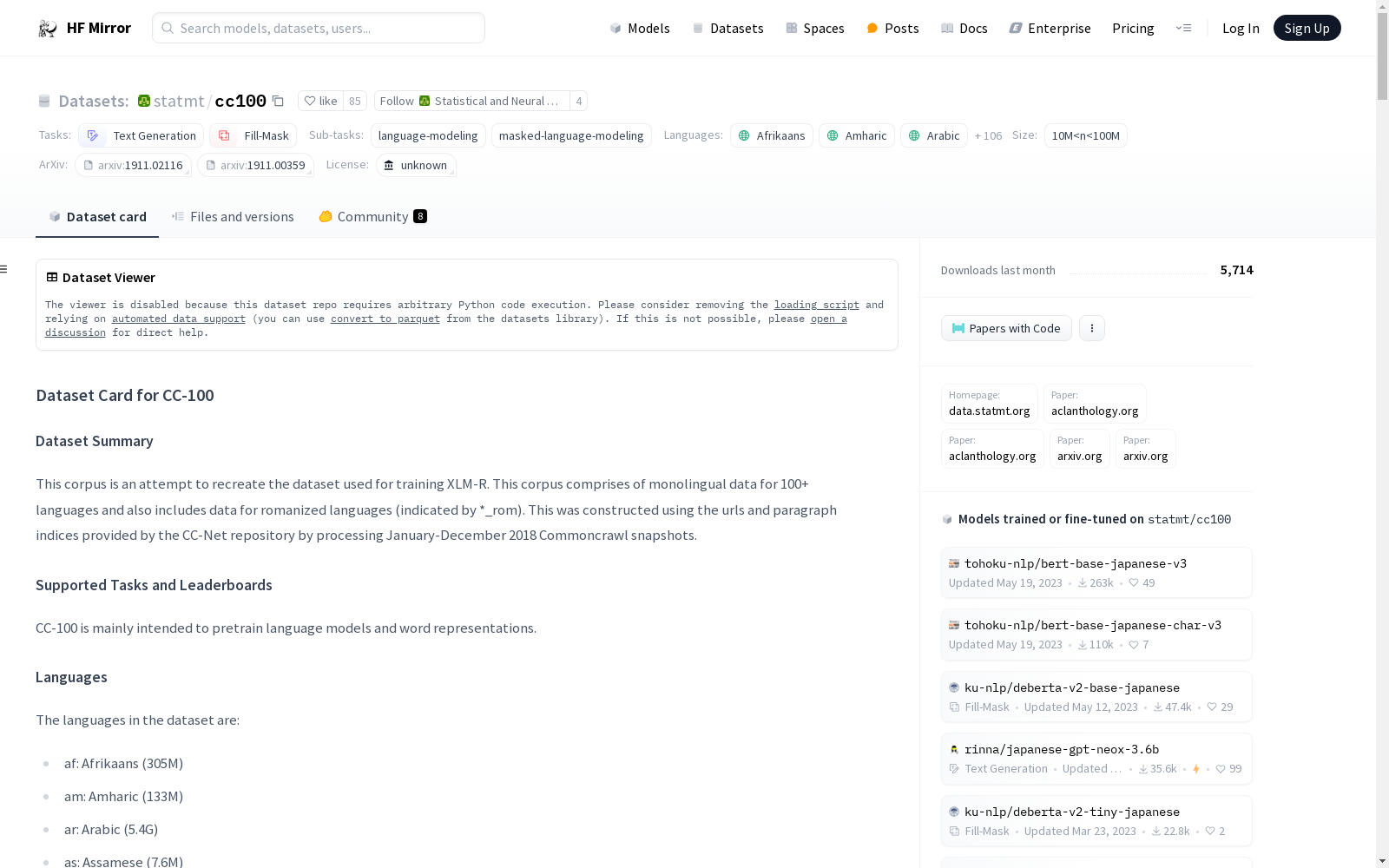

statmt/cc100

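Dataset Card for CC-100

Dataset Description

Dataset Summary

This corpus is an attempt to recreate the dataset used for training XLM-R. It comprises monolingual data for more than 100 languages and also includes data for romanized languages (indicated by *_rom). It was constructed by processing the January-December 2018 Commoncrawl snapshots, using the URLs and paragraph indices provided by the CC-Net repository.

Supported Tasks and Leaderboards

CC-100 is mainly intended for pretraining language models and word representations.

Languages

The languages in the dataset are: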

- af: Afrikaans (305M)

- am: Amharic (133M)

- ar: Arabic (5.4G)

- as: Assamese (7.6M)

- az: Azerbaijani (1.3G)

- be: Belarusian (692M)

- bg: Bulgarian (9.3G)

- bn: Bengali (860M)

- bn_rom: Bengali Romanized (164M)

- br: Breton (21M)

- bs: Bosnian (18M)

- ca: Catalan (2.4G)

- cs: Czech (4.4G)

- cy: Welsh (179M)

- da: Danish (12G)

- de: German (18G)

- el: Greek (7.4G)

- en: English (82G)

- eo: Esperanto (250M)

- es: Spanish (14G)

- et: Estonian (1.7G)

- eu: Basque (488M)

- fa: Persian (20G)

- ff: Fulah (3.1M)

- fi: Finnish (15G)

- fr: French (14G)

- fy: Frisian (38M)

- ga: Irish (108M)

- gd: Scottish Gaelic (22M)

- gl: Galician (708M)

- gn: Guarani (1.5M)

- gu: Gujarati (242M)

- ha: Hausa (61M)

- he: Hebrew (6.1G)

- hi: Hindi (2.5G)

- hi_rom: Hindi Romanized (129M)

- hr: Croatian (5.7G)

- ht: Haitian Creole (9.1M)

- hu: Hungarian (15G)

- hy: Armenian (776M)

- id: Indonesian (36G)

- ig: Igbo (6.6M)

- is: Icelandic (779M)

- it: Italian (7.8G)

- ja: Japanese (15G)

- jv: Javanese (37M)

- ka: Georgian (1.1G)

- kk: Kazakh (889M)

- km: Khmer (153M)

- kn: Kannada (360M)

- ko: Korean (14G)

- ku: Kurdish (90M)

- ky: Kyrgyz (173M)

- la: Latin (609M)

- lg: Ganda (7.3M)

- li: Limburgish (2.2M)

- ln: Lingala (2.3M)

- lo: Lao (63M)

- lt: Lithuanian (3.4G)

- lv: Latvian (2.1G)

- mg: Malagasy (29M)

- mk: Macedonian (706M)

- ml: Malayalam (831M)

- mn: Mongolian (397M)

- mr: Marathi (334M)

- ms: Malay (2.1G)

- my: Burmese (46M)

- my_zaw: Burmese (Zawgyi) (178M)

- ne: Nepali (393M)

- nl: Dutch (7.9G)

- no: Norwegian (13G)

- ns: Northern Sotho (1.8M)

- om: Oromo (11M)

- or: Oriya (56M)

- pa: Punjabi (90M)

- pl: Polish (12G)

- ps: Pashto (107M)

- pt: Portuguese (13G)

- qu: Quechua (1.5M)

- rm: Romansh (4.8M)

- ro: Romanian (16G)

- ru: Russian (46G)

- sa: Sanskrit (44M)

- sc: Sardinian (143K)

- sd: Sindhi (67M)

- si: Sinhala (452M)

- sk: Slovak (6.1G)

- sl: Slovenian (2.8G)

- so: Somali (78M)

- sq: Albanian (1.3G)

- sr: Serbian (1.5G)

- ss: Swati (86K)

- su: Sundanese (15M)

- sv: Swedish (21G)

- sw: Swahili (332M)

- ta: Tamil (1.3G)

- ta_rom: Tamil Romanized (68M)

- te: Telugu (536M)

- te_rom: Telugu Romanized (79M)

- th: Thai (8.7G)

- tl: Tagalog (701M)

- tn: Tswana (8.0M)

- tr: Turkish (5.4G)

- ug: Uyghur (46M)

- uk: Ukrainian (14G)

- ur: Urdu (884M)

- ur_rom: Urdu Romanized (141M)

- uz: Uzbek (155M)

- vi: Vietnamese (28G)

- wo: Wolof (3.6M)

- xh: Xhosa (25M)

- yi: Yiddish (51M)

- yo: Yoruba (1.1M)

- zh-Hans: Chinese (Simplified) (14G)

- zh-Hant: Chinese (Traditional) (5.3G)

- zu: Zulu (4.3M)
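
The entries above follow a regular `code: name (size)` pattern, so they can be turned into structured records, for example to pick out the romanized configurations or to rank languages by size. The following is a minimal sketch, not part of the dataset tooling: `parse_entry` and `LanguageEntry` are hypothetical names, and treating the K/M/G suffixes as decimal multipliers is an assumption, since the card does not say what unit the sizes are measured in.

```python
import re
from typing import NamedTuple, Optional

# Assumption: K/M/G are decimal multipliers; the card does not define them.
_UNITS = {"K": 10**3, "M": 10**6, "G": 10**9}

# Matches lines such as "- bn_rom: Bengali Romanized (164M)".
_ENTRY = re.compile(
    r"^-\s*(?P<code>[\w-]+):\s*(?P<name>.+?)\s*"
    r"\((?P<num>\d+(?:\.\d+)?)(?P<unit>[KMG])\)\s*$"
)


class LanguageEntry(NamedTuple):
    code: str          # configuration code, e.g. "bn_rom"
    name: str          # human-readable language name
    approx_size: int   # size with the suffix expanded (unit unspecified)
    romanized: bool    # True when the code ends in "_rom"


def parse_entry(line: str) -> Optional[LanguageEntry]:
    """Parse one list line; return None if it does not match the pattern."""
    match = _ENTRY.match(line.strip())
    if match is None:
        return None
    size = round(float(match["num"]) * _UNITS[match["unit"]])
    code = match["code"]
    return LanguageEntry(code, match["name"], size, code.endswith("_rom"))


sample_lines = [
    "- bn_rom: Bengali Romanized (164M)",
    "- my_zaw: Burmese (Zawgyi) (178M)",
    "- en: English (82G)",
    "- ss: Swati (86K)",
]
entries = [e for e in map(parse_entry, sample_lines) if e is not None]

# Romanized configurations only.
romanized_codes = [e.code for e in entries if e.romanized]

# Codes ordered from largest to smallest.
by_size = [e.code for e in sorted(entries, key=lambda e: e.approx_size, reverse=True)]
print(romanized_codes, by_size)
```

The lazy match on the name lets entries with parentheses in the name, such as `my_zaw`, parse correctly, because only the final parenthesized group is read as the size.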

Dataset Structure

Data Instances

An example from the am configuration:

{id: 0, text: ተለዋዋጭ የግድግዳ አንግል ሙቅ አንቀሳቅሷል ቲ-አሞሌ አጥቅሼ ... }

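Each data point is a paragraph of text. The paragraphs are presented in their original (unshuffled) order. Documents are separated by a data point consisting of a single newline character.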
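
Because document boundaries are encoded as separator data points, reassembling documents takes a small amount of bookkeeping. The sketch below illustrates one way to do it under stated assumptions: `group_into_documents` is a hypothetical helper, records are assumed to be dictionaries with a `text` field, and the separator is assumed to be exactly the string `"\n"`. The sample records are invented for illustration and are not taken from the corpus.

```python
from typing import Dict, Iterable, Iterator, List


def group_into_documents(records: Iterable[Dict[str, str]]) -> Iterator[List[str]]:
    """Yield one list of paragraphs per document.

    A record whose text is a single newline is treated as a document
    boundary and is not included in the output.
    """
    current: List[str] = []
    for record in records:
        text = record["text"]
        if text == "\n":
            # Separator data point: close the current document, if any.
            if current:
                yield current
                current = []
        else:
            # Ordinary paragraph: drop the trailing newline, keep the order.
            current.append(text.rstrip("\n"))
    if current:
        # Final document when the stream does not end with a separator.
        yield current


# Invented sample records (assumption: paragraphs end with a newline).
sample = [
    {"id": "0", "text": "First paragraph of document A.\n"},
    {"id": "1", "text": "Second paragraph of document A.\n"},
    {"id": "2", "text": "\n"},
    {"id": "3", "text": "Only paragraph of document B.\n"},
]
documents = list(group_into_documents(sample))
print(len(documents))
```

Writing the helper as a generator means it can be applied to a streamed iterator without holding the whole configuration in memory, which matters for the larger languages.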

Data Fields

The data fields are:

- id: the id of the example

- text: the content, as a string

Data Splits

Sizes of some configurations:

| Name | Train set size |
|---|---|
| am | 3124561 |
| sr | 35747957 |

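Dataset Creation

Source Data

The data comes from web pages in a wide variety of languages.

Annotations

The dataset does not contain any additional annotations.

Personal and Sensitive Information

Because the dataset is built from Common Crawl, it may contain personal and sensitive information. This must be taken into account when training deep learning models on CC-100, especially text-generation models.

Considerations for Using the Data

Social Impact of Dataset

[More Information Needed]

Discussion of Biases

[More Information Needed]

Other Known Limitations

[More Information Needed]

Additional Information

Dataset Curators

This dataset was prepared by the Statistical Machine Translation group at the University of Edinburgh using the CC-Net toolkit from Facebook Research.

Licensing Information

The Statistical Machine Translation group at the University of Edinburgh makes no claims of intellectual property on the work of preparing the corpus. By using this dataset, you are also bound by the Common Crawl terms of use.

Citation Information

Please cite the following if you found the resources in this corpus useful: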

@inproceedings{conneau-etal-2020-unsupervised,
  title = "Unsupervised Cross-lingual Representation Learning at Scale",
  author = "Conneau, Alexis and Khandelwal, Kartikay and Goyal, Naman and Chaudhary, Vishrav and Wenzek, Guillaume and Guzm{\'a}n, Francisco and Grave, Edouard and Ott, Myle and Zettlemoyer, Luke and Stoyanov, Veselin",
  editor = "Jurafsky, Dan and Chai, Joyce and Schluter, Natalie and Tetreault, Joel",
  booktitle = "Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics",
  month = jul,
  year = "2020",
  address = "Online",
  publisher = "Association for Computational Linguistics",
  url = "https://aclanthology.org/2020.acl-main.747",
  doi = "10.18653/v1/2020.acl-main.747",
  pages = "8440--8451",
  abstract = "This paper shows that pretraining multilingual language models at scale leads to significant performance gains for a wide range of cross-lingual transfer tasks. We train a Transformer-based masked language model on one hundred languages, using more than two terabytes of filtered CommonCrawl data. Our model, dubbed XLM-R, significantly outperforms multilingual BERT (mBERT) on a variety of cross-lingual benchmarks, including +14.6{\%} average accuracy on XNLI, +13{\%} average F1 score on MLQA, and +2.4{\%} F1 score on NER. XLM-R performs particularly well on low-resource languages, improving 15.7{\%} in XNLI accuracy for Swahili and 11.4{\%} for Urdu over previous XLM models. We also present a detailed empirical analysis of the key factors that are required to achieve these gains, including the trade-offs between (1) positive transfer and capacity dilution and (2) the performance of high and low resource languages at scale. Finally, we show, for the first time, the possibility of multilingual modeling without sacrificing per-language performance; XLM-R is very competitive with strong monolingual models on the GLUE and XNLI benchmarks. We will make our code and models publicly available.",
}

@inproceedings{wenzek-etal-2020-ccnet,
  title = "{CCN}et: Extracting High Quality Monolingual Datasets from Web Crawl Data",
  author = "Wenzek, Guillaume and Lachaux, Marie-Anne and Conneau, Alexis and Chaudhary, Vishrav and Guzm{\'a}n, Francisco and Joulin, Armand and Grave, Edouard",
  editor = "Calzolari, Nicoletta and B{\'e}chet, Fr{\'e}d{\'e}ric and Blache, Philippe and Choukri, Khalid and Cieri, Christopher and Declerck, Thierry and Goggi, Sara and Isahara, Hitoshi and Maegaard, Bente and Mariani, Joseph and Mazo, H{\`e}l{\`e}ne and Moreno, Asuncion and Odijk, Jan and Piperidis, Stelios",
  booktitle = "Proceedings of the Twelfth Language Resources and Evaluation Conference",
  month = may,
  year = "2020",
  address = "Marseille, France",
  publisher = "European Language Resources Association",
  url = "https://aclanthology.org/2020.lrec-1.494",
  pages = "4003--4012",
  abstract = "Pre-training text representations have led to significant improvements in many areas of natural language processing. The quality of these models benefits greatly from the size of the pretraining corpora as long as its quality is preserved. In this paper, we describe an automatic pipeline to extract massive high-quality monolingual datasets from Common Crawl for a variety of languages. Our pipeline follows the data processing introduced in fastText (Mikolov et al., 2017; Grave et al., 2018), that deduplicates documents and identifies their language. We augment this pipeline with a filtering step to select documents that are close to high quality corpora like Wikipedia.",
  language = "English",
  ISBN = "979-10-95546-34-4",
}

Contributions

Thanks to @abhishekkrthakur for adding this dataset.